
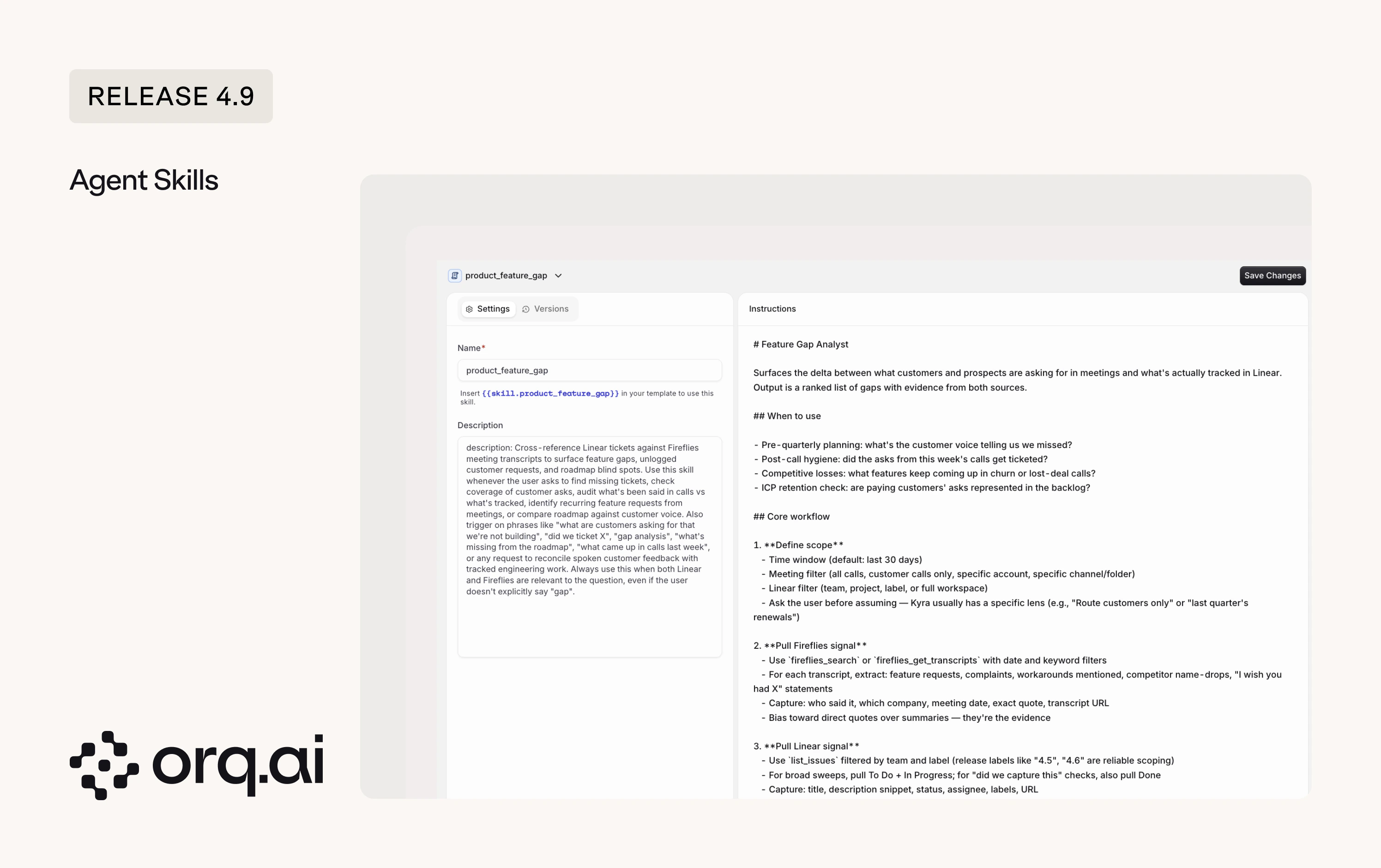

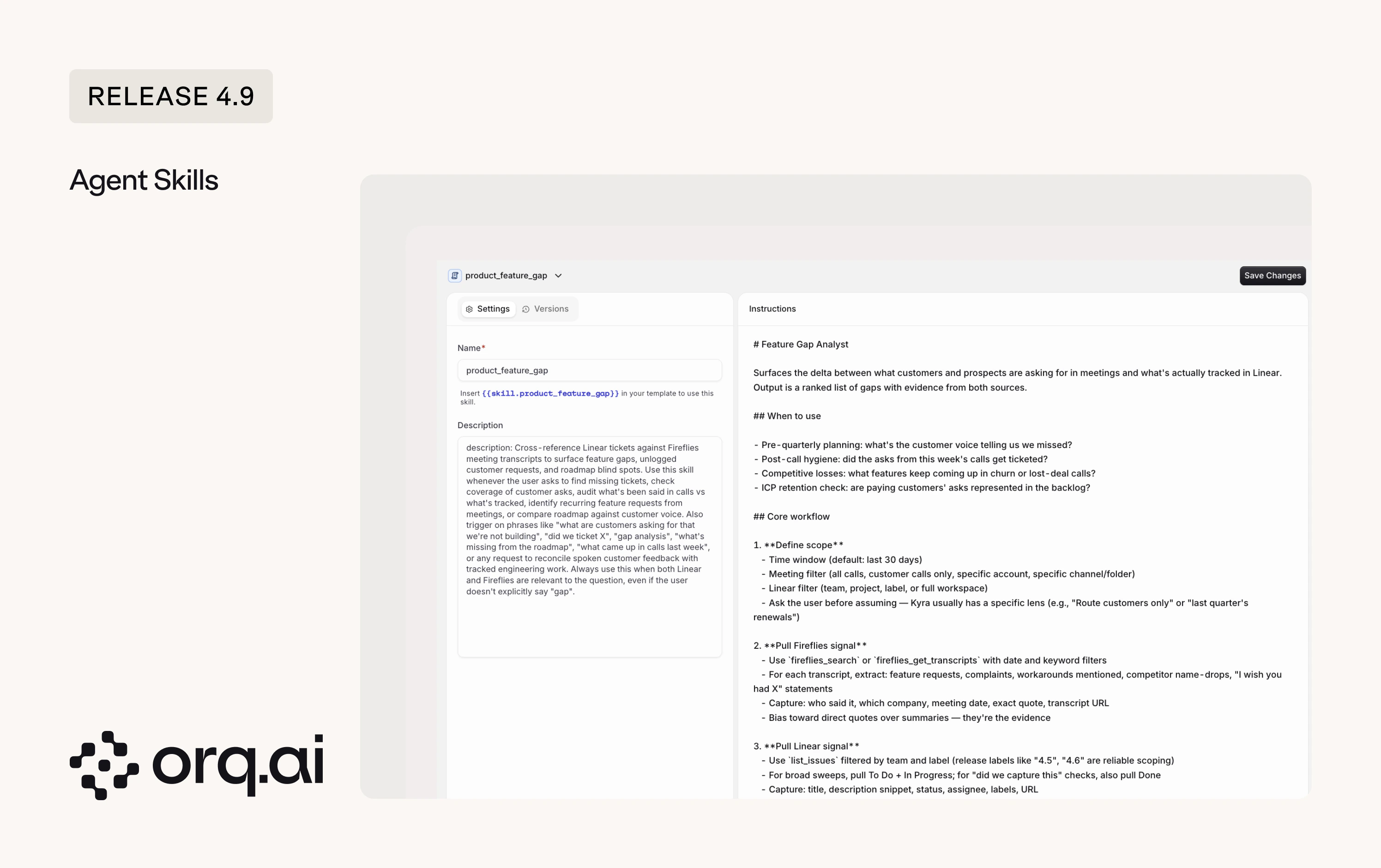

Agents are increasingly capable, but often lack the context they need to do real work reliably. Skills solve this by packaging context, tools, steps, and output format into one definition. Define a Skill once and reuse it across any Agent that needs it.

- On-demand invocation: Agents invoke attached Skills only when the conversation needs them, so the base Agent instructions stay small and you don’t pay tokens for capabilities the conversation never touches. Two examples:

  - A `write-changelog` Skill your docs-agent calls when a release ships, encoding section order, bullet format, and tone rules.

  - A `linear-feature-gap` Skill that walks your product agent through `list_initiatives`, then `list_issues` filtered by a `feature-request` label, and surfaces requests not tied to any initiative as gaps.

- How the Agent picks a Skill: The Agent decides which Skill to invoke based on the Skill’s description and the Agent’s instructions, so both need to be clear and specific. You can also explicitly ask the Agent to use a Skill in the user input message.
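The on-demand idea can be illustrated with a minimal Python sketch. It is not the orq.ai implementation or API: the `Skill` class, `base_prompt`, and `invoke` are hypothetical names invented for illustration, and the Skill body is placeholder text. The sketch only shows why the base prompt stays small: it carries one description line per Skill, while the full body is loaded only when a Skill is invoked.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """Hypothetical stand-in for a Skill definition (not the orq.ai schema)."""
    name: str
    description: str  # short text the Agent reads when deciding what to invoke
    body: str         # full instructions, loaded only on invocation


def base_prompt(instructions: str, skills: list[Skill]) -> str:
    """Build the base prompt: Agent instructions plus one line per attached Skill."""
    lines = [instructions, "", "Available Skills:"]
    lines += [f"- {s.name}: {s.description}" for s in skills]
    return "\n".join(lines)


def invoke(skills: list[Skill], name: str) -> str:
    """Return the full body of an attached Skill; raises KeyError if not attached."""
    by_name = {s.name: s for s in skills}
    return by_name[name].body


# Placeholder body standing in for real section-order, format, and tone rules.
changelog_body = "PLACEHOLDER changelog rules. " * 50

skills = [
    Skill(
        name="write-changelog",
        description="Write a changelog entry when a release ships.",
        body=changelog_body,
    ),
]

prompt = base_prompt("You are the docs-agent.", skills)
loaded = invoke(skills, "write-changelog")
```

In this sketch `prompt` contains only the Skill name and description, so its size does not grow with the Skill body. The body is added to the context only after `invoke` is called.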

Prompt Snippets are now Skills, and the change is backward compatible. Existing references keep working, so you don’t need to update existing Prompts, and you can reference Skills going forward the same way you referenced Prompt Snippets. Start with the Skills overview.

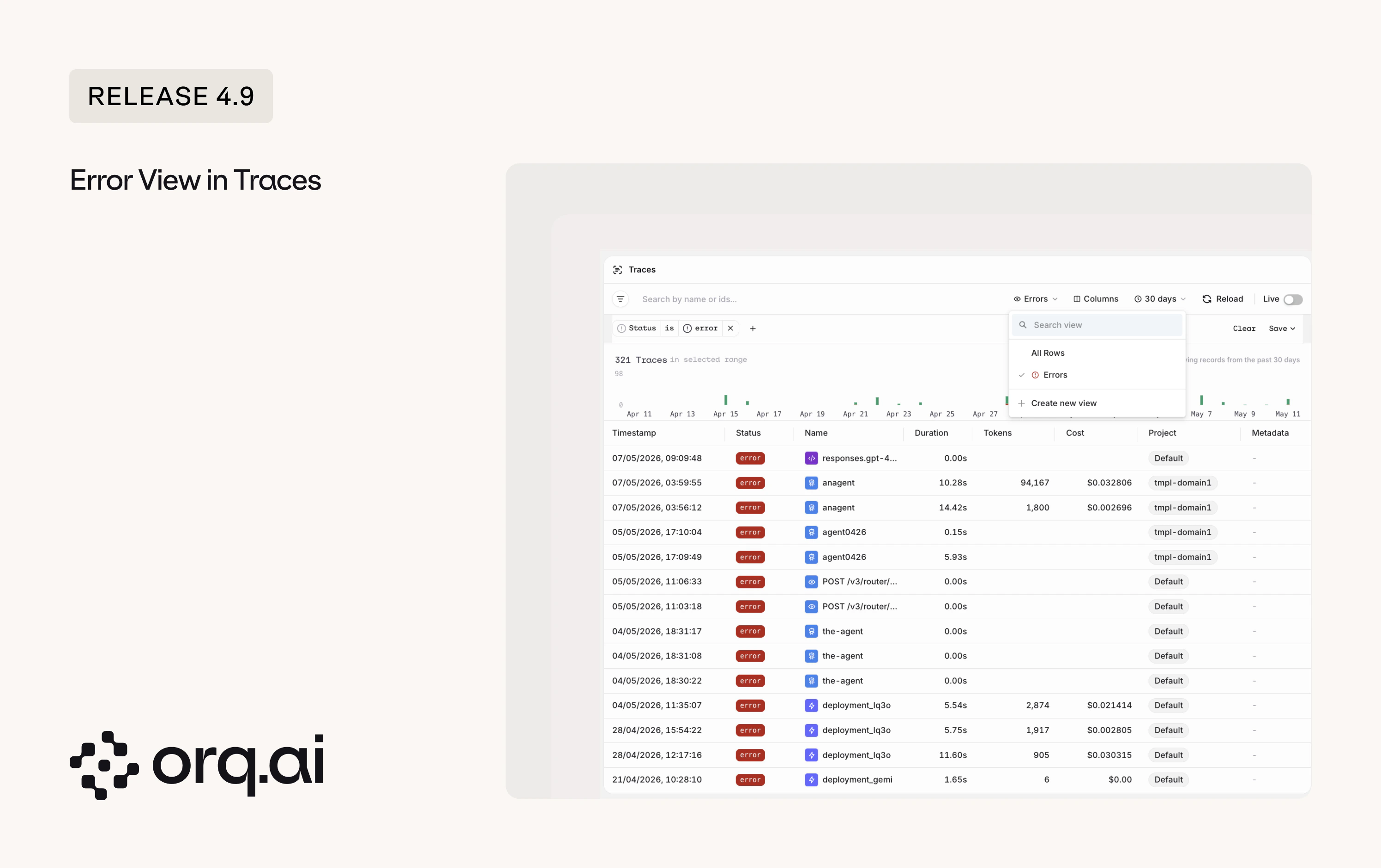

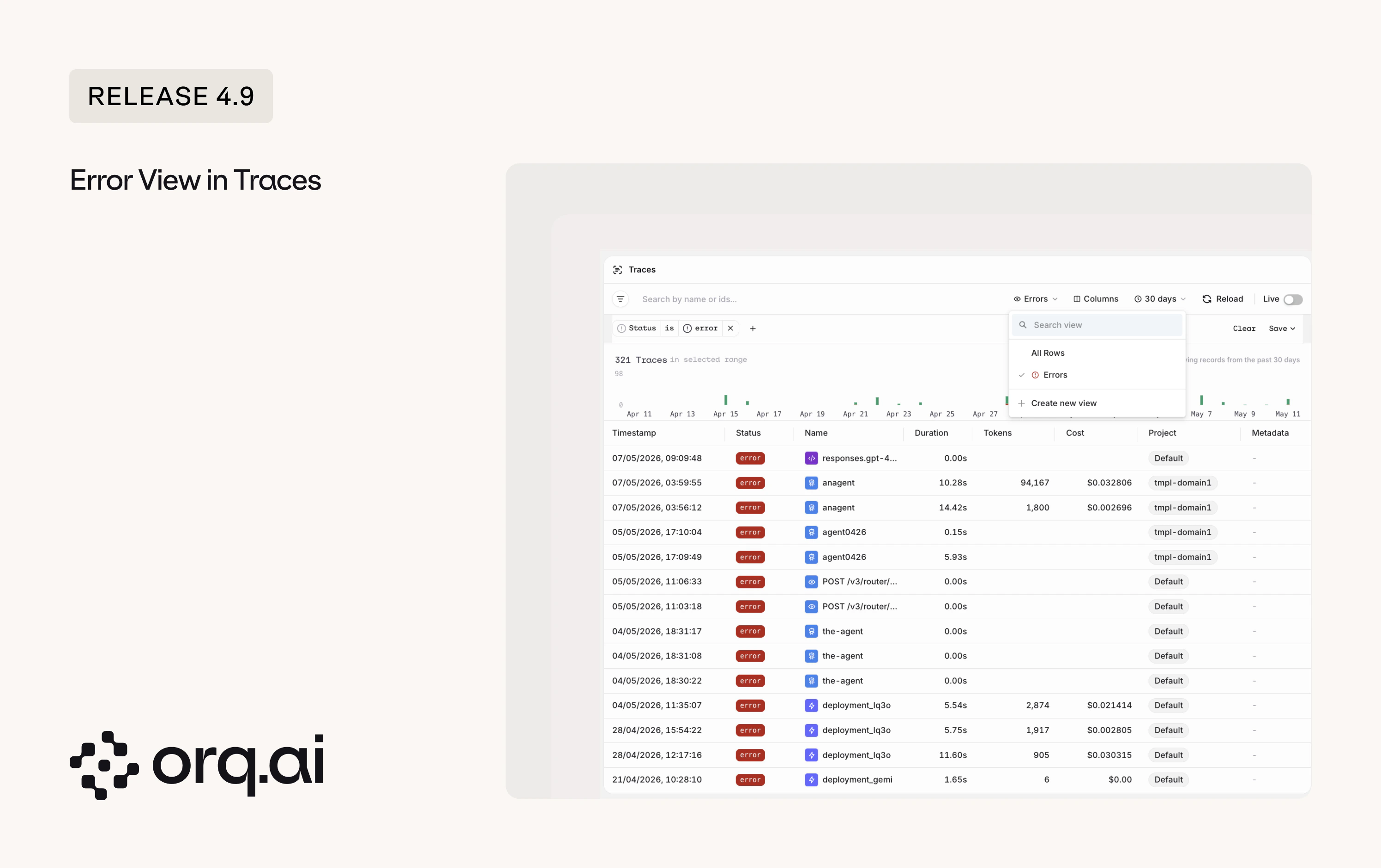

A new Errors view in Traces surfaces every failing request across systems in one place. Filter by Agent to triage a single Agent’s errors, combine the error filter with an Identity to see issues a specific client is hitting, and save the result as a custom view.

- Errors across systems: Every failing request surfaced in one view, across providers and Agents.

- Scope to an Agent or Identity: Combine the error filter with an Agent or Identity to debug a specific Agent or client.

- Custom views with quick filter: Save filtered setups as custom views, and jump to errors from any view with the quick error filter.

- Open the Errors view: Click the All Rows view button in the top right of Traces and select Errors.

See Viewing errors in Traces for details.
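As a rough mental model of how the error filter combines with an Agent or Identity, here is a small Python sketch over in-memory records. The record fields (`status`, `agent`, `identity_id`), the `filter_traces` function, and the sample data are all assumptions made for illustration and do not reflect the actual trace schema or any orq.ai API. A saved custom view is modeled as a plain dictionary of filter settings.

```python
def filter_traces(traces, errors_only=False, agent=None, identity_id=None):
    """Return traces matching every filter that is set (filters combine with AND)."""
    result = []
    for trace in traces:
        if errors_only and trace.get("status") != "error":
            continue
        if agent is not None and trace.get("agent") != agent:
            continue
        if identity_id is not None and trace.get("identity_id") != identity_id:
            continue
        result.append(trace)
    return result


# Hypothetical sample data; field names are illustrative only.
traces = [
    {"id": "t1", "status": "error", "agent": "docs-agent", "identity_id": "client-a"},
    {"id": "t2", "status": "ok", "agent": "docs-agent", "identity_id": "client-a"},
    {"id": "t3", "status": "error", "agent": "product-agent", "identity_id": "client-b"},
    {"id": "t4", "status": "error", "agent": "docs-agent", "identity_id": "client-b"},
]

# A "custom view" modeled as a reusable set of filter settings.
client_a_errors_view = {"errors_only": True, "identity_id": "client-a"}

all_errors = filter_traces(traces, errors_only=True)
docs_agent_errors = filter_traces(traces, errors_only=True, agent="docs-agent")
client_a_errors = filter_traces(traces, **client_a_errors_view)
```

Each filter narrows the result independently, which is why scoping errors to one Agent or one Identity is just the error filter plus one more condition.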

- LangGraph graph view: See the LangGraph agent graph alongside its trace.

- LangChain and LangGraph callbacks: A new `@orq-ai/langchain-js` callback adds tracing for Node and TypeScript apps. The Python callback now supports global registration and async, so you wire it up once instead of per invocation.

- Azure Foundry Model Onboarding Improved: Connect every model in an Azure Foundry deployment in one step.

- OpenAI data residency: Pin OpenAI traffic to a specific geography for compliance.

- DeepSeek V4 thinking and caching: Use DeepSeek V4 with reasoning and prompt caching.

- Faster image generation: The AI Router no longer waits for the generated image to finish uploading to storage before returning the response.

- Observe onboarding snippets: Copy-paste tracing snippets in the Observe onboarding flow, matching the Router experience.

- Team of Agents section: Multi-agent orchestration moves out of the tool picker into its own section in Agent Studio.

- Filter traces by identity: `list_traces` accepts an `identity_id` filter.

New additions to the Model Garden across Alibaba, AWS Bedrock, Google Vertex, Google AI, Mistral, and Tensorix. Browse details on the Supported Models page.

| Provider | Models |
|---|---|
| Alibaba | deepseek_v4_flash, deepseek_v4_pro, kimi_k2_5, qwen_mt_flash, qwen_mt_lite, qwen_plus_2025_12_01, qwen3_5_27b, qwen3_5_35b_a3b, qwen3_5_122b_a10b, qwen3_5_flash_2026_02_23, qwen3_6_27b, qwen3_6_35b_a3b, qwen3_6_flash, qwen3_6_plus, qwen3_6_plus_2026_04_02, qwen3_vl_flash_2025_10_15, qwen3_vl_plus_2025_12_19 |
| AWS Bedrock | amazon_nova_premier_v10, qwen_qwen3_coder_next |
| Google Vertex | deepseek_ai_deepseek_v3_2_maas, gemini-3.1-flash-lite, moonshotai_kimi_k2_thinking_maas |
| Google AI | gemini-3.1-flash-lite, gemma_4_26b_a4b_it, gemma_4_31b_it |
| Mistral | mistral_medium_2604 |
| Tensorix | nemotron_3_super_120b, qwen3_5_9b, qwen3_5_122b_a10b |

- Anthropic /v1/messages bridge: Anthropic-format `/v1/messages` requests now route accurately to more providers through the AI Router.

- Moderations endpoint: Run content moderation on text through the AI Router to classify against safety categories and get scores for harmful content detection.

- Trace filter by Evaluator: Filtering Traces by Evaluator now returns matches.

- Duplicate traces: When a request was instrumented both through a client framework (such as an OpenTelemetry SDK or a LangChain callback) and the AI Router, it produced two trace rows. Requests now resolve to a single trace with parent-span relationships intact.

- Annotations on traces: Annotations render correctly on traces from the new pipeline and propagate to datasets and review queues.

- Gemini streaming with function tools: Token-by-token streaming works on Gemini tool calls.

- Gemini multimodal inputs: Audio and HTTPS image URLs sent to Gemini reach the model instead of being dropped.

- OpenAI file uploads: Filenames are preserved through chat completions.

- Router policies and guardrails: Enable toggles stay in sync across tables, expressions clear cleanly, duplicate names are caught at entry, and policy limits update without errors.

- Pydantic AI onboarding snippet: Snippet runs out of the box.

- Agent secret redaction: Secrets used in Agent instructions and stored values are redacted before being saved.
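To make the duplicate-traces fix above more concrete, the following Python sketch shows one way spans from two instrumentation layers can resolve to a single trace. It is an illustration under stated assumptions, not the platform's actual pipeline: it assumes both layers emit spans carrying the same `trace_id` and that the AI Router span records the framework span as its parent. The field names, `resolve_traces`, and `root_spans` are hypothetical.

```python
from collections import defaultdict


def resolve_traces(spans):
    """Group spans by trace_id so each request yields exactly one trace."""
    traces = defaultdict(list)
    for span in spans:
        traces[span["trace_id"]].append(span)
    return dict(traces)


def root_spans(trace):
    """Return spans whose parent is not part of the same trace (the roots)."""
    span_ids = {span["span_id"] for span in trace}
    return [span for span in trace if span["parent_id"] not in span_ids]


# One request seen by both a client framework callback and the router.
# All values are hypothetical sample data.
spans = [
    {"trace_id": "req-1", "span_id": "a", "parent_id": None, "source": "framework"},
    {"trace_id": "req-1", "span_id": "b", "parent_id": "a", "source": "router"},
]

traces = resolve_traces(spans)
roots = root_spans(traces["req-1"])
```

With a shared `trace_id`, the two spans land in one trace with a single root, and the router span stays attached to its framework parent rather than appearing as a second trace row.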