Use the following steps to add your LiteLLM integration to the AI Studio and import your existing models into the AI Router.

Set up your LiteLLM instance

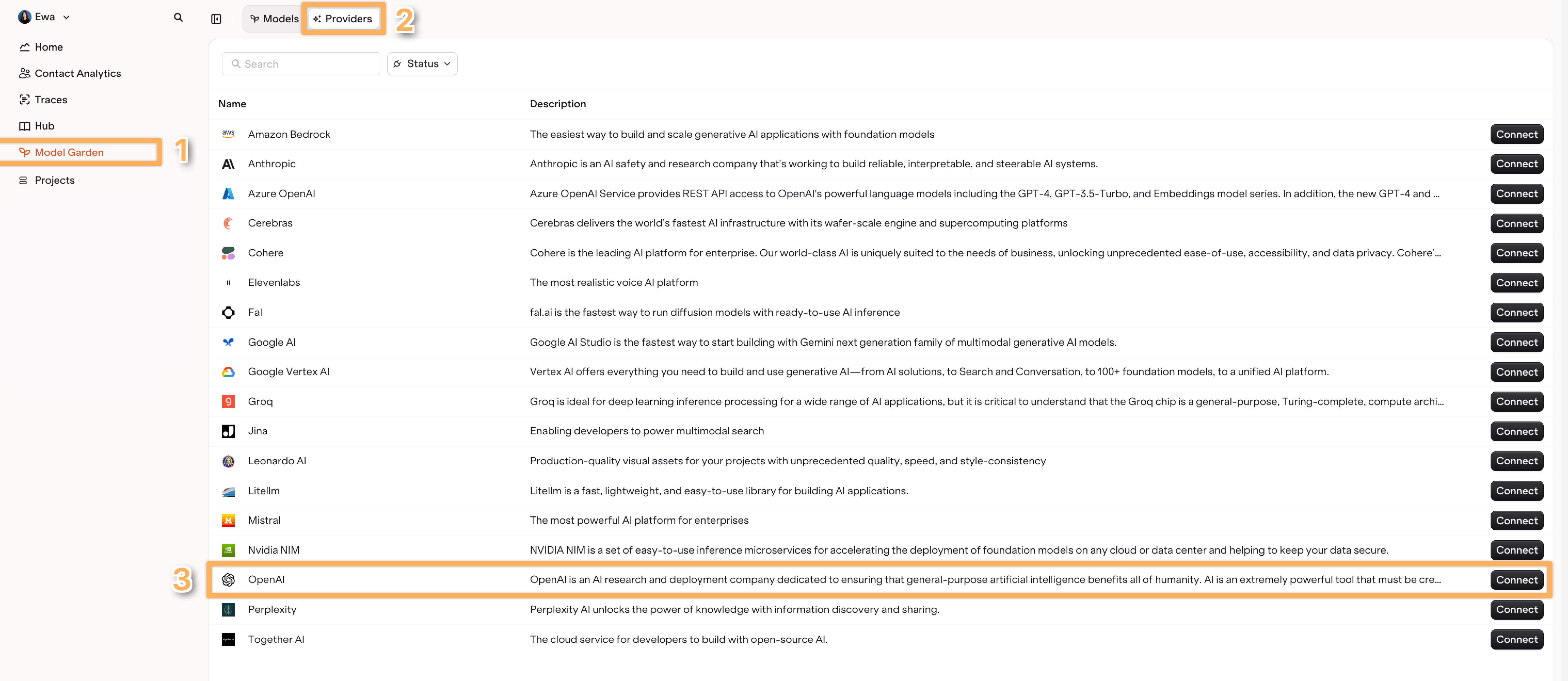

- Open the AI Router page within the AI Studio.

-

Find the Providers tab and select LiteLLM.

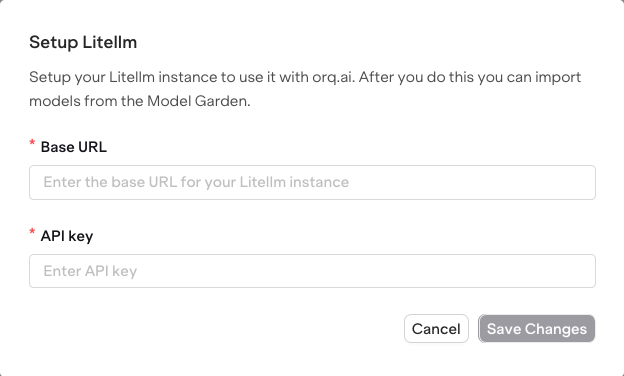

-

Choose Setup LiteLLM instance.

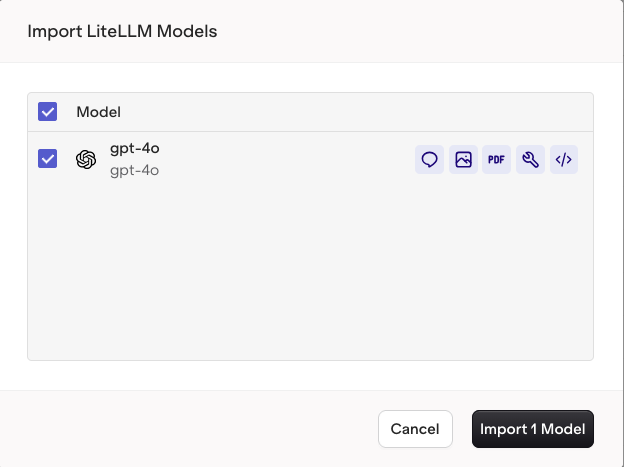

Import Models

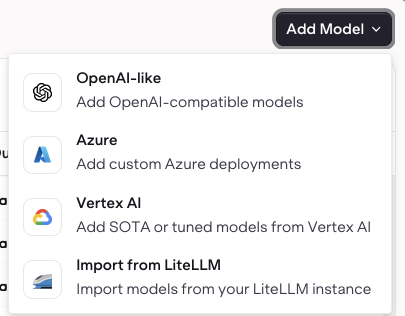

- Switch to the Models tab in the AI Router.

- Select Add Models and choose Import from LiteLLM.

- Select the models you want to import from the list retrieved from your LiteLLM provider.
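Before importing, it can help to check which models your LiteLLM instance actually exposes. The sketch below assumes your instance is a LiteLLM proxy that serves the OpenAI-compatible `GET /v1/models` endpoint and accepts a bearer key. The function names, base URL, and key are illustrative assumptions, and the list shown in the AI Studio may not match this output exactly.

```python
import json
import urllib.request


def model_ids(payload: dict) -> list[str]:
    """Extract model IDs from an OpenAI-style /v1/models response body."""
    # Assumption: the response looks like {"object": "list", "data": [{"id": ...}, ...]}.
    return sorted(item["id"] for item in payload.get("data", []))


def list_litellm_models(base_url: str, api_key: str, timeout: float = 10.0) -> list[str]:
    """Fetch the model IDs a LiteLLM proxy reports via GET /v1/models.

    base_url and api_key are your own values (hypothetical here), for example
    a proxy at http://localhost:4000 and a key issued by that proxy.
    """
    request = urllib.request.Request(
        f"{base_url.rstrip('/')}/v1/models",
        headers={"Authorization": f"Bearer {api_key}"},
    )
    # Send the request and decode the JSON body into a dict.
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return model_ids(json.load(response))
```

For example, calling `list_litellm_models("http://localhost:4000", key)` against a reachable proxy should print a sorted list of model IDs, which you can compare against the models offered in the import list.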