Google Vertex AI provides enterprise-grade access to Gemini models with enhanced security, compliance, and control. By connecting Vertex AI to Orq.ai, you get enterprise Gemini capabilities with service account authentication, project-level billing, and data residency controls.

Set Up Your API Key

To use Vertex AI with Orq.ai, you need to create a service account with the appropriate permissions.

Create Service Account

- Go to the Google Cloud Console

- Navigate to IAM & Admin > Service Accounts

- Click Create Service Account

- Enter a name (e.g., “orq-vertex-ai”)

- Click Create and Continue

- Grant the following roles:

  - Service Account Token Creator

  - Vertex AI User

- Click Done
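In IAM policies, the two roles above appear under the role IDs roles/iam.serviceAccountTokenCreator and roles/aiplatform.user. As a small sketch, the check below compares a list of granted role IDs (for example, copied from the IAM page) against both; the helper name missing_roles is hypothetical and not part of any Google Cloud or Orq.ai API.

```python
from typing import List

# Roles the Orq.ai service account needs, keyed by their Console display names.
REQUIRED_ROLES = {
    "Service Account Token Creator": "roles/iam.serviceAccountTokenCreator",
    "Vertex AI User": "roles/aiplatform.user",
}


def missing_roles(granted: List[str]) -> List[str]:
    """Return the display names of required roles absent from `granted`.

    `granted` holds IAM role IDs such as "roles/aiplatform.user".
    Hypothetical helper, for illustration only.
    """
    granted_set = set(granted)
    return [name for name, role_id in REQUIRED_ROLES.items() if role_id not in granted_set]
```

An empty result means both roles are in place; anything else names the role still to grant.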

Create Service Account Key

- Find your service account in the list

- Click the Actions menu (three dots)

- Select Manage Keys

- Click Add Key > Create New Key

- Select JSON format

- Click Create to download the key file

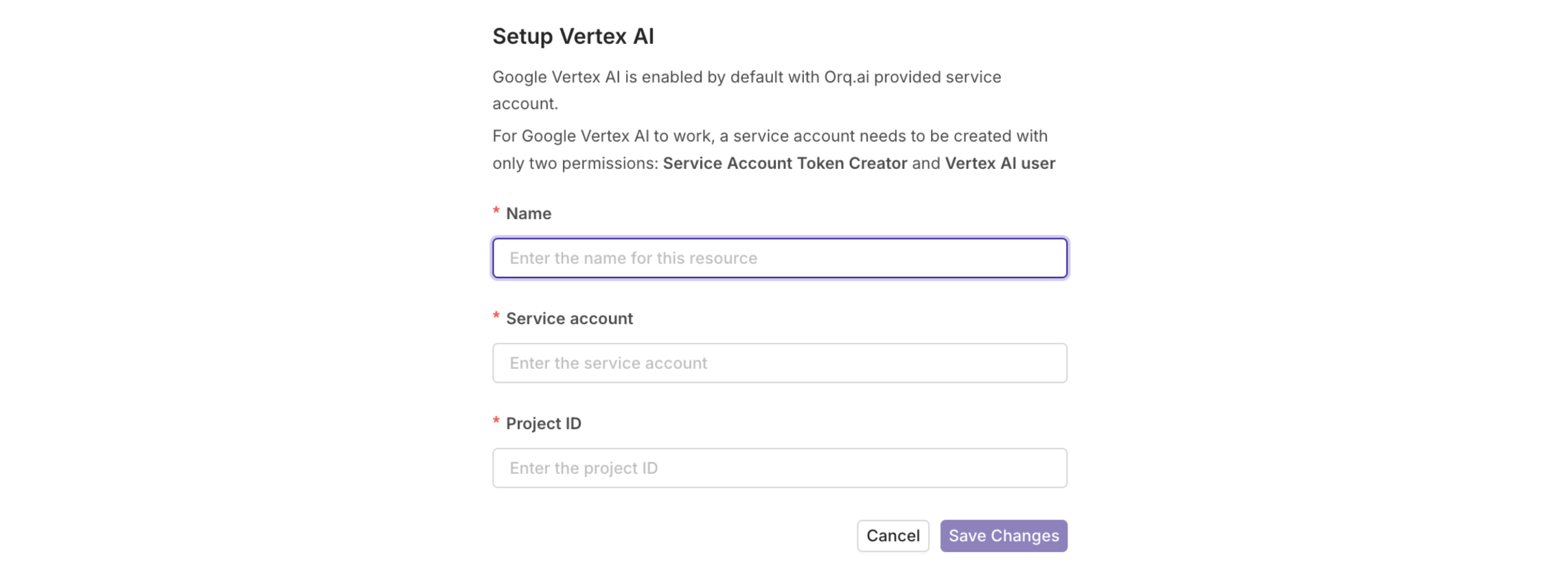
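Before pasting the downloaded key anywhere, it can help to confirm the file really is a service account key. The sketch below parses the file's contents and checks a few fields Google writes into every service account key (type, project_id, private_key, client_email); it checks only that subset, not the full schema, and the function name load_service_account_key is hypothetical.

```python
import json

# A few of the fields present in every Google Cloud service account key file
# (a subset used for sanity checks, not the full schema).
REQUIRED_KEY_FIELDS = ("type", "project_id", "private_key", "client_email")


def load_service_account_key(text: str) -> dict:
    """Parse the key file's contents and sanity-check its fields."""
    key = json.loads(text)
    missing = [field for field in REQUIRED_KEY_FIELDS if not key.get(field)]
    if missing:
        raise ValueError(f"key file is missing fields: {', '.join(missing)}")
    if key["type"] != "service_account":
        raise ValueError(f"expected type 'service_account', got {key['type']!r}")
    return key
```

Pass it the text read from the downloaded file, for example open(path, encoding="utf-8").read(), where path is wherever your browser saved the key.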

Configure in Orq.ai

- Navigate to AI Router > Providers

- Find Google Vertex AI in the list

- Click the Configure button

- Select Setup your own API Key

- Enter configuration name (e.g., “Vertex AI Production”)

- Paste your service account JSON in the Deployment JSON field (see format below)

- Click Save to complete the setup

Deployment JSON Format
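The exact schema of the Deployment JSON field is defined by Orq.ai. The sketch below only illustrates combining the three ingredients this section names (service account credentials, project ID, and region) into one JSON document. The top-level key names projectId, location, and credentials, and the helper build_deployment_json, are hypothetical placeholders; match them to the format Orq.ai expects before pasting.

```python
import json
from typing import Optional


def build_deployment_json(key: dict, location: str, project_id: Optional[str] = None) -> str:
    """Combine service account credentials, project ID, and region into one JSON string.

    HYPOTHETICAL layout: "projectId", "location", and "credentials" are
    placeholder key names, not Orq.ai's documented schema.
    """
    if not location:
        raise ValueError("a region is required, e.g. 'us-central1'")
    deployment = {
        # Fall back to the project ID recorded in the key file itself.
        "projectId": project_id or key["project_id"],
        # Region that serves the requests; choose it for data residency.
        "location": location,
        # The unmodified service account key downloaded from Google Cloud.
        "credentials": key,
    }
    return json.dumps(deployment, indent=2)
```

Defaulting the project ID to the one stored in the key avoids a mismatch between the credentials and the project they belong to, while still allowing an explicit override.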

Your deployment JSON should include the service account credentials, project ID, and region.

- Project ID: Find your Google Cloud project ID at the top of the Google Cloud Console.

- Location: Common regions include us-central1, europe-west1, and asia-northeast1. Choose a region based on your data residency requirements.

Available Models

The AI Router supports all current Vertex AI Gemini models. The most commonly used ones are listed below.

Recommended Models

| Model | Context (tokens) | Best For |
|---|---|---|
| google/gemini-3-pro-preview | 1M | Latest preview, most advanced |
| google/gemini-2.5-pro | 1M | Latest stable, most capable |
| google/gemini-2.5-flash | 1M | Fast, balanced performance |
| google/gemini-2.0-flash-001 | 1M | Stable, reliable |
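Every model in the table is addressed by a slug of the form google/model-name. A small check like the hypothetical parse_model_slug below can catch a missing provider prefix before a request is sent; it is an illustration, not part of the Orq.ai SDKs.

```python
from typing import Tuple

# Slugs from the Recommended Models table.
RECOMMENDED_MODELS = [
    "google/gemini-3-pro-preview",
    "google/gemini-2.5-pro",
    "google/gemini-2.5-flash",
    "google/gemini-2.0-flash-001",
]


def parse_model_slug(slug: str) -> Tuple[str, str]:
    """Split a slug such as 'google/gemini-2.5-pro' into (provider, model name)."""
    provider, sep, model = slug.partition("/")
    if not sep or not provider or not model:
        raise ValueError(f"expected 'provider/model-name', got {slug!r}")
    return provider, model
```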

Quick Start

Using the AI Router

Access Vertex AI Gemini models through the AI Router with enterprise-grade security, chat completions, streaming, and intelligent model routing. All Vertex AI models are available with consistent formatting and automatic request logging.

Vertex AI models use the provider slug format google/model-name, for example google/gemini-2.5-pro.

Prerequisites

Before making requests to the AI Router, configure your environment and install the SDKs if you plan to use them. To create an API key:

- Go to API Keys

- Click Create API Key and copy it

- Store it in your environment as ORQ_API_KEY
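Once ORQ_API_KEY is set, client code can read it instead of hard-coding the key. The sketch below builds request headers from that variable, assuming the AI Router accepts the key as a Bearer token in the Authorization header; orq_headers is a hypothetical helper, and the env parameter exists only so it can be exercised without touching the real environment.

```python
import os
from typing import Dict, Mapping, Optional


def orq_headers(env: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Build AI Router request headers from the ORQ_API_KEY variable.

    ASSUMPTION: the router accepts the key as a Bearer token.
    """
    env = os.environ if env is None else env
    api_key = env.get("ORQ_API_KEY")
    if not api_key:
        raise RuntimeError("ORQ_API_KEY is not set; create a key under API Keys first")
    return {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
```

Failing early with a clear message is easier to debug than an authentication error from the router.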

Chat Completions
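As a rough sketch, a chat completion request can be prepared with only the Python standard library. This assumes the AI Router exposes an OpenAI-compatible endpoint at https://api.orq.ai/v2/router/chat/completions (confirm the URL in the Orq.ai API reference); build_chat_request is a hypothetical helper that builds the request without sending it.

```python
import json
import urllib.request
from typing import Any, Dict, List

# ASSUMPTION: OpenAI-compatible chat completions endpoint of the AI Router.
# Confirm the URL in the Orq.ai API reference before relying on it.
ROUTER_URL = "https://api.orq.ai/v2/router/chat/completions"


def build_chat_request(
    model: str, messages: List[Dict[str, str]], api_key: str, **params: Any
) -> urllib.request.Request:
    """Prepare, but do not send, a chat completion request for the AI Router."""
    # Extra keyword arguments (e.g. temperature) are passed through in the body.
    body = {"model": model, "messages": messages, **params}
    return urllib.request.Request(
        ROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

To send it, pass the request to urllib.request.urlopen and parse the JSON reply; if the response follows the OpenAI-compatible shape, the generated text is at choices[0].message.content. Note that sending performs a real, billed call to Vertex AI.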

Send messages to Vertex AI Gemini models and receive model responses.

Streaming
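Streaming is typically enabled by adding "stream": true to the request body; the reply then arrives as a series of server-sent events. The sketch below assumes the AI Router follows the OpenAI-compatible convention of data: lines carrying JSON chunks and a final data: [DONE] line; iter_stream_text is a hypothetical helper that yields the text deltas in order.

```python
import json
from typing import Iterable, Iterator


def iter_stream_text(lines: Iterable[str]) -> Iterator[str]:
    """Yield content deltas from OpenAI-style server-sent event lines.

    ASSUMPTION: chunks arrive as `data: {...}` lines and the stream ends
    with `data: [DONE]`, as in OpenAI-compatible APIs.
    """
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank keep-alive lines and SSE comments
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream marker
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            # The first chunk may carry only a role, so delta content can be absent.
            text = (choice.get("delta") or {}).get("content")
            if text:
                yield text
```

Printing each piece as it arrives (with flush=True) gives the incremental output that makes streaming worthwhile.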

Stream responses for real-time output and an improved user experience.

Function Calling
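A function calling request declares the available tools as JSON Schema, and the model may answer with tool calls instead of text. The sketch below assumes the AI Router accepts the OpenAI-compatible tools format and returns tool_calls with JSON-encoded arguments; the get_weather tool and both helper functions are hypothetical, and no weather lookup is performed.

```python
import json
from typing import Any, Dict, List, Tuple

# Hypothetical tool definition in the OpenAI-compatible "tools" format.
GET_WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string", "description": "City name"}},
            "required": ["city"],
        },
    },
}


def build_tool_request_body(model: str, messages: List[Dict[str, Any]], tools: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Request body offering `tools` to the model (sent like any chat completion)."""
    return {"model": model, "messages": messages, "tools": tools}


def extract_tool_calls(response: Dict[str, Any]) -> List[Tuple[str, Dict[str, Any]]]:
    """Return (function name, parsed arguments) pairs from a chat completion response."""
    message = response["choices"][0]["message"]
    calls = []
    for call in message.get("tool_calls") or []:
        fn = call["function"]
        # Arguments arrive as a JSON-encoded string in OpenAI-style responses.
        calls.append((fn["name"], json.loads(fn["arguments"])))
    return calls
```

Your code then runs the named function itself and sends the result back in a follow-up message so the model can compose its final answer.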

Vertex AI Gemini models support function calling for structured interactions.

Automatic Request Logging

All requests made through the AI Router are automatically logged to your dashboard, where you can view:

- Request details: Model used, tokens, latency

- Cost tracking: Per-request and aggregate costs

- Error monitoring: Failed requests with error messages

- Performance metrics: Response times and throughput