Shadow AI refers to the use of AI tools, models, and services by employees or teams without the knowledge, approval, or oversight of IT, security, or platform teams. It is the AI-era evolution of shadow IT, and carries significantly higher risk: unlike unauthorized SaaS adoption, shadow AI actively processes, retains, and may train on sensitive enterprise data. Gartner estimates that more than 40% of enterprises will experience a security or compliance incident linked to unauthorized AI usage by 2030.[1]

Common shadow AI patterns

Shadow AI rarely starts as a deliberate policy violation. Most instances begin as productivity shortcuts:

- Personal API keys: Developers calling providers directly with personal accounts, outside approved procurement, budget controls, and data agreements.

- Code assistants and IDE integrations: When adopted individually, tools like GitHub Copilot, Cursor, and Windsurf send source code to external providers with no central procurement or visibility.

- Autonomous coding agents: Agentic tools that browse repositories, write and execute code, and call external APIs operate with broad access under developer credentials, with no organizational oversight.

- Unsanctioned tools: Teams in marketing, finance, and HR adopting AI apps or browser plugins without IT review.

- Internal apps calling unapproved models: Engineering teams building on providers or endpoints not covered by the organization’s vendor agreements.

- Free-tier consumer accounts: Employees using personal free-tier accounts where organizational controls and data processing terms do not apply.

Why shadow AI creates outsized risk

Data exposure

The top categories of data leaked to AI tools are source code (30%), legal content (22%), and merger and acquisition data (12%), according to Harmonic Security.[3]

Compliance gaps

Unmanaged AI usage creates audit failures across major frameworks. Processing personal data through an unapproved tool may violate GDPR. Sending protected health information to an external model provider without a Business Associate Agreement violates HIPAA. Using a high-risk AI system without the required documentation creates liability under the EU AI Act. None of these failures surface until an incident or audit reveals them.

Cost and budget sprawl

When multiple teams independently license the same AI capabilities, run up uncapped API costs on personal keys, or pay for redundant tools, AI spend compounds without visibility or accountability.

Detection lag

IBM’s 2025 Cost of a Data Breach Report found an average 247-day detection window for shadow AI incidents, six days longer than standard data breach detection.[4] The longer unauthorized usage persists, the harder it is to assess scope or contain the exposure.

Identifying shadow AI across the organization

Detection requires visibility across multiple layers:

- Network traffic: Look for outbound connections to known AI API endpoints, unfamiliar providers, or model inference services not in the approved vendor list.

- Application and SaaS audits: Browser extension reviews, OAuth token inventories, and cloud access security broker (CASB) data surface what tools are actively in use.

- Usage anomalies: Sudden spikes in AI-related costs, new departments adopting AI without onboarding, or unusual request volumes from specific applications.

- Prompt and response activity: AI requests that bypass approved infrastructure leave no internal log trail. The absence of observability data for known AI-heavy workflows is itself a signal.

- Developer access patterns: Personal API keys, direct provider integrations in CI/CD pipelines, and hardcoded model endpoints in codebases are reliable indicators.
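The network-traffic and developer-access checks above can be approximated with a small script. This is an illustrative sketch, not an Orq.ai feature: it assumes you can export egress or proxy logs as a list of URLs and read source files as text. `KNOWN_AI_HOSTS` is a partial example list of public provider endpoints, `APPROVED_AI_HOSTS` is a hypothetical set of hosts your organization allows direct access to, and the key pattern is a heuristic for `sk-`-prefixed keys that will miss other formats.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Example (incomplete) list of public AI provider API hosts; extend for your environment.
KNOWN_AI_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "api.mistral.ai",
    "generativelanguage.googleapis.com",
}

# Hypothetical: AI hosts sanctioned for direct access. Empty means all AI traffic
# is expected to go through the approved gateway, so any direct call is flagged.
APPROVED_AI_HOSTS: set[str] = set()


def flag_unsanctioned_ai_traffic(urls):
    """Count outbound requests to known AI hosts that are not explicitly approved."""
    hits = Counter()
    for url in urls:
        host = urlparse(url).hostname or ""  # hostname is already lowercased
        if host in KNOWN_AI_HOSTS and host not in APPROVED_AI_HOSTS:
            hits[host] += 1
    return hits


# Heuristic indicators of direct provider use in source code or CI configuration.
CODE_INDICATORS = {
    "hardcoded_ai_endpoint": re.compile(
        r"https?://(?:" + "|".join(re.escape(h) for h in sorted(KNOWN_AI_HOSTS)) + r")"
    ),
    # Matches keys with an "sk-" prefix only; other providers use different formats.
    "sk_prefixed_api_key": re.compile(r"\bsk-[A-Za-z0-9_-]{20,}"),
}


def scan_source(text):
    """Return the names of indicators found in one file's contents."""
    return sorted(name for name, pattern in CODE_INDICATORS.items() if pattern.search(text))


if __name__ == "__main__":
    egress = [
        "https://api.openai.com/v1/chat/completions",
        "https://api.openai.com/v1/embeddings",
        "https://example.org/index.html",
    ]
    print(flag_unsanctioned_ai_traffic(egress))  # Counter({'api.openai.com': 2})
    print(scan_source('base_url="https://api.anthropic.com"'))  # ['hardcoded_ai_endpoint']
```

Pattern matching like this produces leads, not verdicts: a flagged host or key still needs a human to confirm whether the usage is actually unsanctioned.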

How the AI Router helps

The AI Router is a centralized, managed gateway for model access. Routing all AI traffic through a single approved endpoint makes unauthorized usage structurally easier to identify because sanctioned usage has a clear, observable path. What this enables:

- Enforce approved model and provider lists. Requests to unsanctioned providers are not routed.

- Apply per-workspace or per-environment access controls so teams access only the models and capabilities appropriate to their context.

- Centralize API key management. Distribute workspace credentials to developers so teams operate under governed, revocable tokens rather than personal provider accounts.

- Capture all requests through a single audit surface. Anything that bypasses the router is, by definition, outside the governed path.

- Apply Policies at a global or project level to restrict how workspace credentials can be used: blocking PII processing, limiting which models a key can invoke, or constraining usage to an approved purpose.

- Enforce Guardrail Rules to apply content and compliance constraints on requests and responses in real time, without requiring changes to application code.
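To make the allowlist and credential-scoping ideas concrete, here is a minimal sketch of the decision a gateway makes per request. It is not the AI Router's implementation or API: `WorkspacePolicy`, `authorize`, the workspace names, and the `provider/model` identifiers are hypothetical, and the email regex stands in for a real PII guardrail, which would need far broader detection.

```python
import re
from dataclasses import dataclass

# Deliberately naive PII check for illustration; a real guardrail needs much more.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass(frozen=True)
class WorkspacePolicy:
    """Hypothetical policy attached to one workspace credential."""
    workspace: str
    allowed_models: frozenset
    block_pii: bool = True


def authorize(policies, workspace, model, prompt):
    """Return (allowed, reason) for a request arriving at the gateway."""
    policy = policies.get(workspace)
    if policy is None:
        # Unknown or revoked credential: deny by default, nothing is routed.
        return False, "unknown workspace credential"
    if model not in policy.allowed_models:
        return False, f"model {model!r} is not approved for {workspace}"
    if policy.block_pii and EMAIL_RE.search(prompt):
        return False, "prompt contains an email address"
    return True, "ok"


if __name__ == "__main__":
    policies = {
        "marketing": WorkspacePolicy("marketing", frozenset({"openai/gpt-4o-mini"})),
    }
    print(authorize(policies, "marketing", "openai/gpt-4o-mini", "Draft a tagline"))
    print(authorize(policies, "marketing", "other/model-x", "Draft a tagline"))
```

The key design choice is denying by default: a request that does not match a governed workspace and an approved model is refused rather than passed through.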

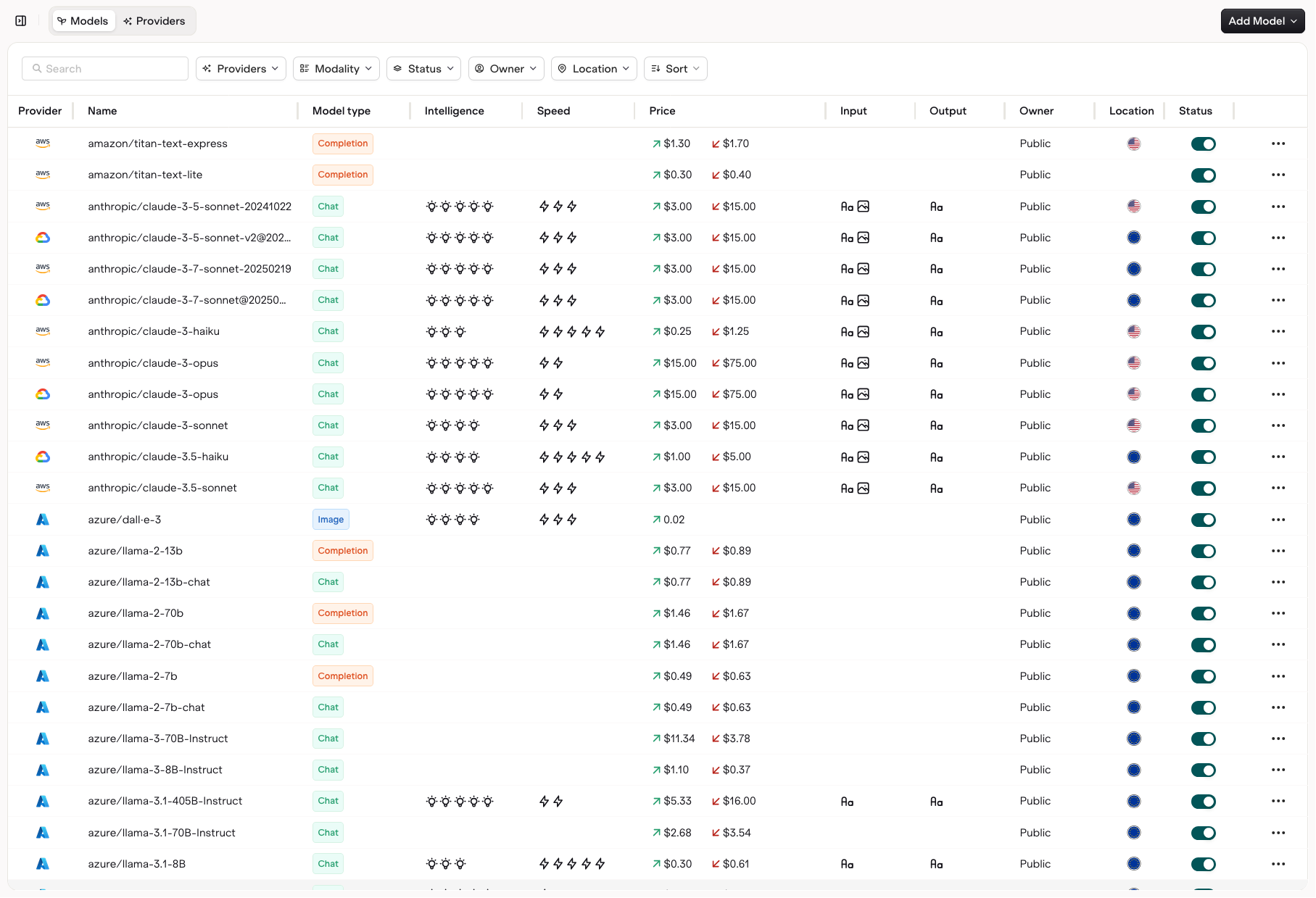

AI Router

Route all model traffic through a single managed endpoint.

Supported Models

View and filter approved models across all providers.

Router Policies

Apply access rules and usage constraints to workspace credentials at a global or project level.

Guardrail Rules

Enforce content and compliance rules on requests and responses in real time.

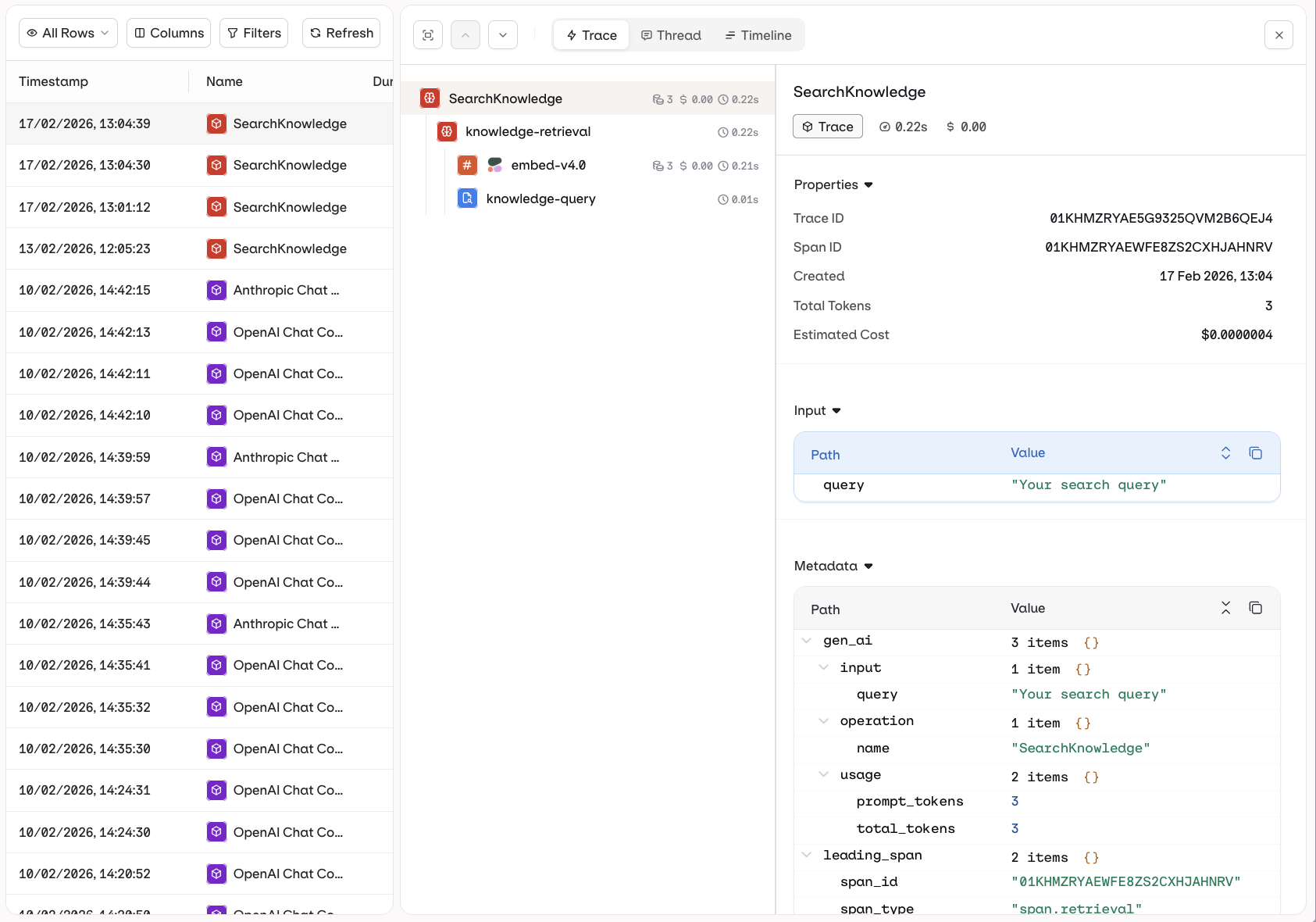

How Observability helps

Observability gives platform and security teams continuous visibility into what AI is doing across the organization. What this enables:

- Monitor request volume, model usage, token consumption, and cost by workspace, team, or application.

- Surface which workflows, applications, or integrations are generating AI traffic.

- Investigate whether specific usage patterns are expected, compliant, and within approved scope.

- Establish a baseline for normal AI activity. Deviations from baseline are actionable signals, not noise.

- Provide audit-ready records of model inputs, outputs, and request metadata for compliance review.
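One simple way to turn "establish a baseline" into an alert is a trailing z-score on daily request counts per workspace. This is a generic statistical sketch, not how Observability computes baselines; it assumes you can export daily totals as `(label, value)` pairs, and the 14-day window and 3-sigma threshold are arbitrary starting points.

```python
import math
from statistics import mean, stdev


def find_anomalies(daily_counts, baseline_days=14, threshold=3.0):
    """Flag days that deviate from a trailing baseline by more than `threshold` std devs.

    daily_counts: list of (label, value) pairs, oldest first, e.g. daily requests
    for one workspace. Returns (label, value, z_score) for each flagged day.
    """
    if baseline_days < 2:
        raise ValueError("baseline_days must be at least 2 to estimate a standard deviation")
    anomalies = []
    for i in range(baseline_days, len(daily_counts)):
        window = [value for _, value in daily_counts[i - baseline_days:i]]
        mu, sigma = mean(window), stdev(window)
        label, value = daily_counts[i]
        if sigma == 0:
            # Perfectly flat baseline: any change at all is a deviation.
            if value != mu:
                anomalies.append((label, value, math.copysign(math.inf, value - mu)))
            continue
        z = (value - mu) / sigma
        if abs(z) > threshold:
            anomalies.append((label, value, round(z, 2)))
    return anomalies
```

A trailing window adapts as legitimate usage grows; workloads with strong weekly cycles may need a seasonal baseline instead.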

Observability

Monitor model activity, usage trends, and request traces across teams.

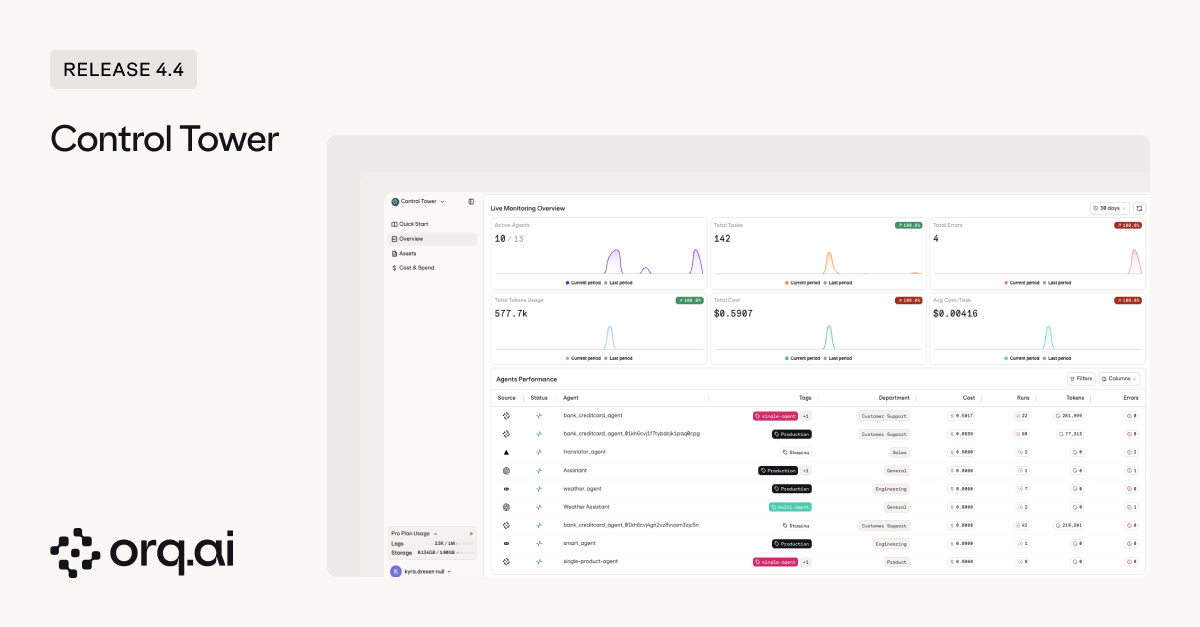

How Control Tower helps

Control Tower provides the governance layer that security, platform, and compliance teams need to operationalize AI oversight at scale. What this enables:

- Define and enforce organizational policies for AI usage, including which models, providers, and use cases are approved.

- Give security and platform teams a single view of AI activity across products, teams, and environments.

- Detect out-of-character usage: sudden shifts in request volume, unexpected model selections, or activity from applications that fall outside known workflows are visible in real time.

- Run Evaluators at a low sample rate across active deployments to detect drift in prompt behavior, output quality, or compliance posture, catching gradual changes early.

- Detect, investigate, and respond to policy deviations before they escalate into incidents.

- Reduce shadow AI surface area by standardizing approved patterns and making the compliant route the default.
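Low-rate evaluation typically relies on deterministic sampling, so a given trace is always either in or out of the sample. The sketch below is hypothetical and independent of how Control Tower schedules Evaluators: `should_evaluate` hashes a trace ID to select roughly `sample_rate` of traffic, and `drift_alert` compares the pass rate of recent sampled evaluations against an assumed baseline pass rate.

```python
import hashlib


def should_evaluate(trace_id: str, sample_rate: float) -> bool:
    """Deterministically select roughly `sample_rate` of traces for evaluation."""
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    # 48 bits of the hash mapped into [0, 1); exact in a float, so it never reaches 1.0.
    bucket = int.from_bytes(digest[:6], "big") / 2**48
    return bucket < sample_rate


def drift_alert(baseline_pass_rate: float, recent_results: list[bool], tolerance: float = 0.05) -> bool:
    """Return True if the recent evaluator pass rate fell more than `tolerance` below baseline."""
    if not recent_results:
        return False
    recent_rate = sum(recent_results) / len(recent_results)
    return baseline_pass_rate - recent_rate > tolerance


if __name__ == "__main__":
    sampled = [t for t in (f"trace-{i}" for i in range(10_000)) if should_evaluate(t, 0.05)]
    print(f"sampled {len(sampled)} of 10000 traces")
    print(drift_alert(0.95, [True] * 80 + [False] * 20))  # True: 0.80 is well below 0.95
```

Hashing the trace ID instead of calling a random generator keeps sampling reproducible, which makes it easier to re-run the same evaluation set when investigating a drift alert.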

Control Tower

Governance and oversight for AI usage across the organization.