What are Traces

With Traces, dive into the workflow of each model generation and understand the inner workings of an LLM call on orq.ai. Traces correspond to events within the generations. A Deployment can produce several kinds of events:

- Evaluators & Guardrails

- Retrieval using Knowledge Base

- Caching

- Retries and Fallbacks

Creating Traces

Traces are automatically generated when using Orq.ai Deployments. Create custom traces for application code using framework instrumentation or the Orq.ai SDK.

Framework Instrumentation

For popular AI frameworks and libraries, use automatic instrumentation with OpenTelemetry:

Framework Integrations

Explore automatic instrumentation for OpenAI, LangChain, LlamaIndex, CrewAI, Autogen, and 15+ other frameworks with OpenTelemetry integration.

Custom Tracing using the @traced decorator

For custom functions or application workflows, use the @traced decorator from the Python SDK. It works with both synchronous and async functions.
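To make the decorator's behaviour concrete, here is a minimal sketch of how a tracing decorator of this kind can wrap both synchronous and async functions. It uses a local, hypothetical stand-in named `traced` and an in-memory `SPANS` list, not the Orq.ai SDK itself. The `name` and `type` parameter names are assumptions for illustration, while `capture_input`, `capture_output`, and `attributes` mirror the options named in this section. Check the SDK reference for the actual import path and signature.

```python
import asyncio
import functools
import inspect
import time

# Hypothetical in-memory span store; a real SDK would export spans instead.
SPANS = []


def traced(name=None, type="function", capture_input=True,
           capture_output=True, attributes=None):
    """Illustrative stand-in for a tracing decorator (not the Orq.ai SDK)."""

    def decorator(fn):
        span_name = name or fn.__name__

        def record(args, kwargs, output, started):
            # Store one span per call, honouring the capture flags.
            SPANS.append({
                "name": span_name,
                "type": type,
                "input": {"args": args, "kwargs": kwargs} if capture_input else None,
                "output": output if capture_output else None,
                "attributes": dict(attributes or {}),
                "duration_s": time.perf_counter() - started,
            })

        if inspect.iscoroutinefunction(fn):
            # Async functions get an async wrapper so they stay awaitable.
            @functools.wraps(fn)
            async def async_wrapper(*args, **kwargs):
                started = time.perf_counter()
                result = await fn(*args, **kwargs)
                record(args, kwargs, result, started)
                return result
            return async_wrapper

        @functools.wraps(fn)
        def sync_wrapper(*args, **kwargs):
            started = time.perf_counter()
            result = fn(*args, **kwargs)
            record(args, kwargs, result, started)
            return result
        return sync_wrapper

    return decorator


@traced(type="retrieval", attributes={"index": "docs"})
def search(query):
    return [f"doc about {query}"]


@traced(name="summarize", type="llm", capture_input=False)
async def summarize(text):
    await asyncio.sleep(0)  # placeholder for a real model call
    return text.upper()


search("traces")
asyncio.run(summarize("secret prompt"))
```

In this sketch the second span keeps its output but stores no input, which is the kind of behaviour to expect when suppressing sensitive prompts with `capture_input=False`.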

The @traced decorator supports the following span types:

| Type | Description |
|---|---|
| agent | A high-level orchestration step or agent workflow |
| embedding | An embedding generation operation |
| function | A general-purpose function or processing step |
| llm | A direct LLM API call |
| retrieval | A retrieval or knowledge lookup operation |
| tool | An external tool call |

Capture or suppress inputs/outputs with capture_input and capture_output, and attach custom metadata with the attributes parameter.

How to Lookup Traces

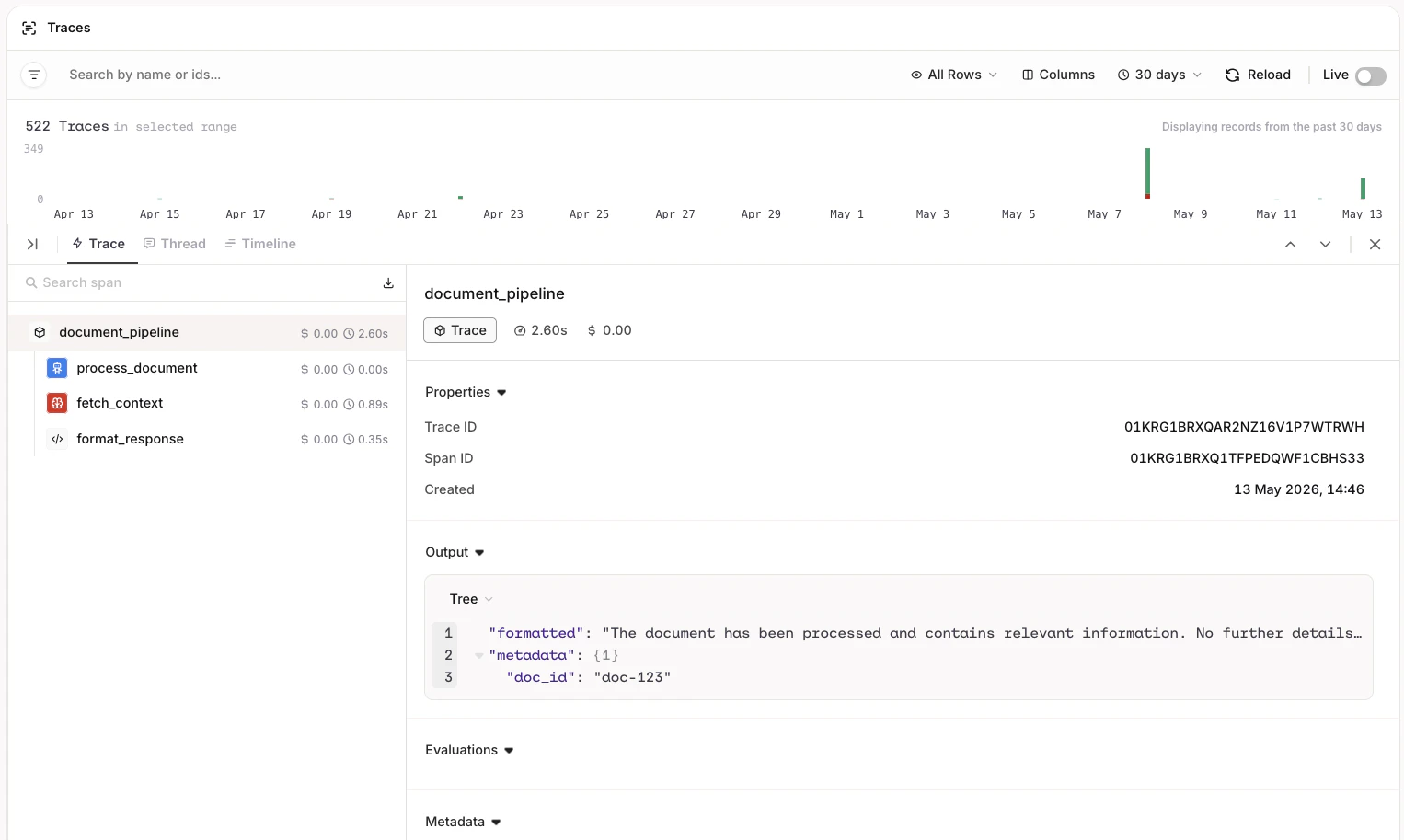

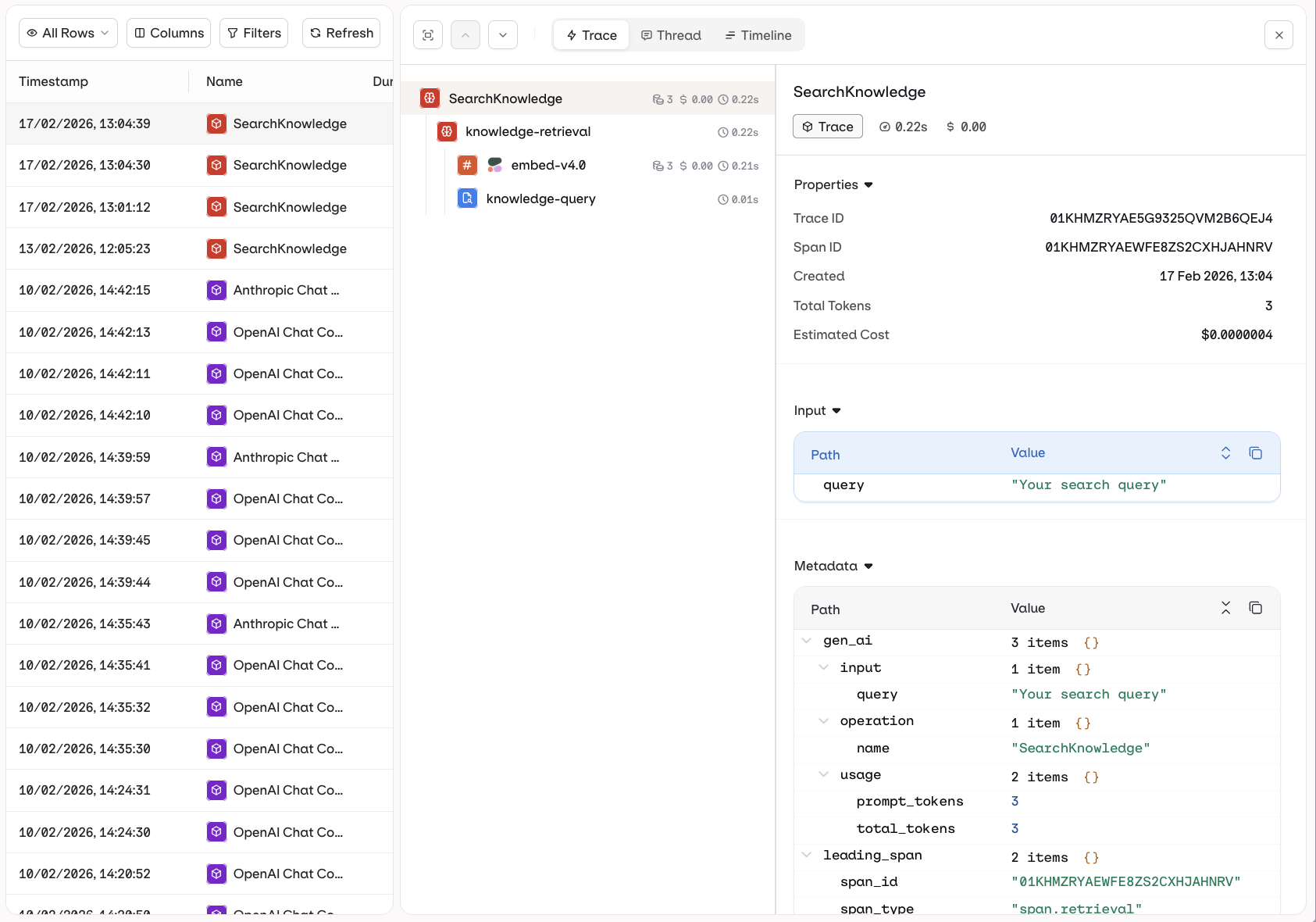

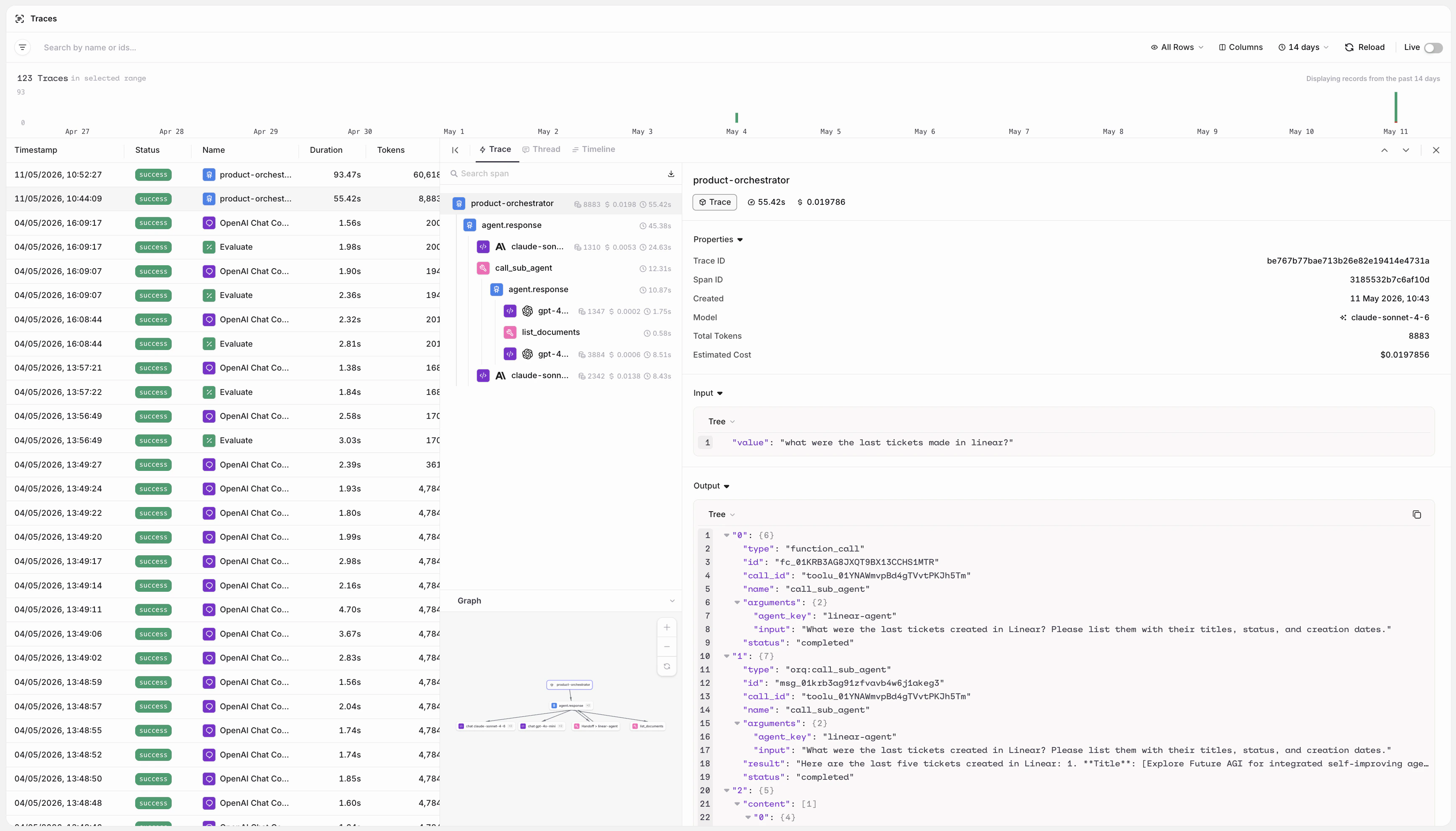

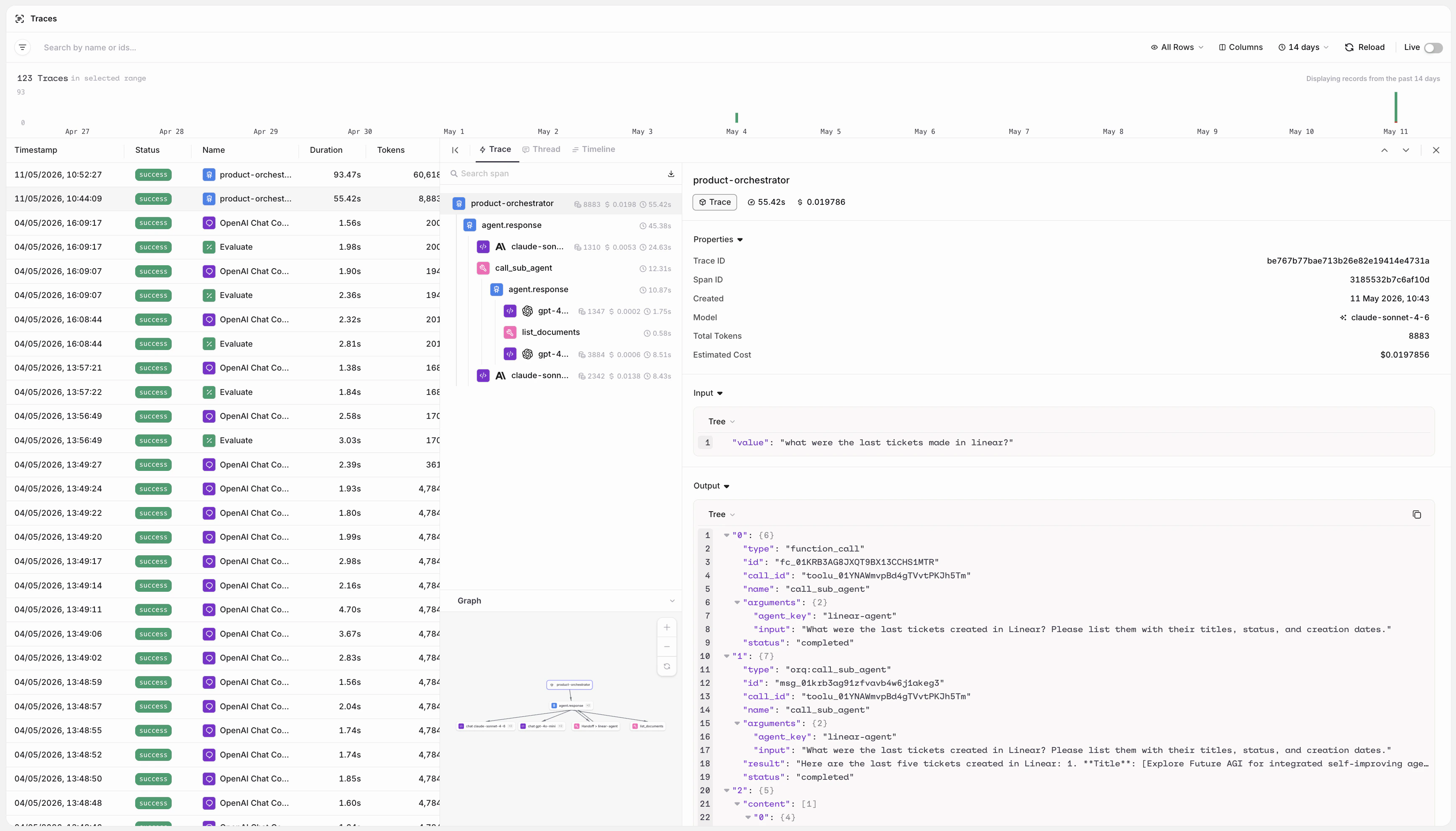

To find traces, open the AI Studio and go to the Traces section.

- The left column shows the list of Traces available.

- Selecting a trace opens the middle column, which shows the hierarchy of events in order of execution.

- Selecting a single step in that hierarchy opens the right column, which shows details for that step.

Trace Views

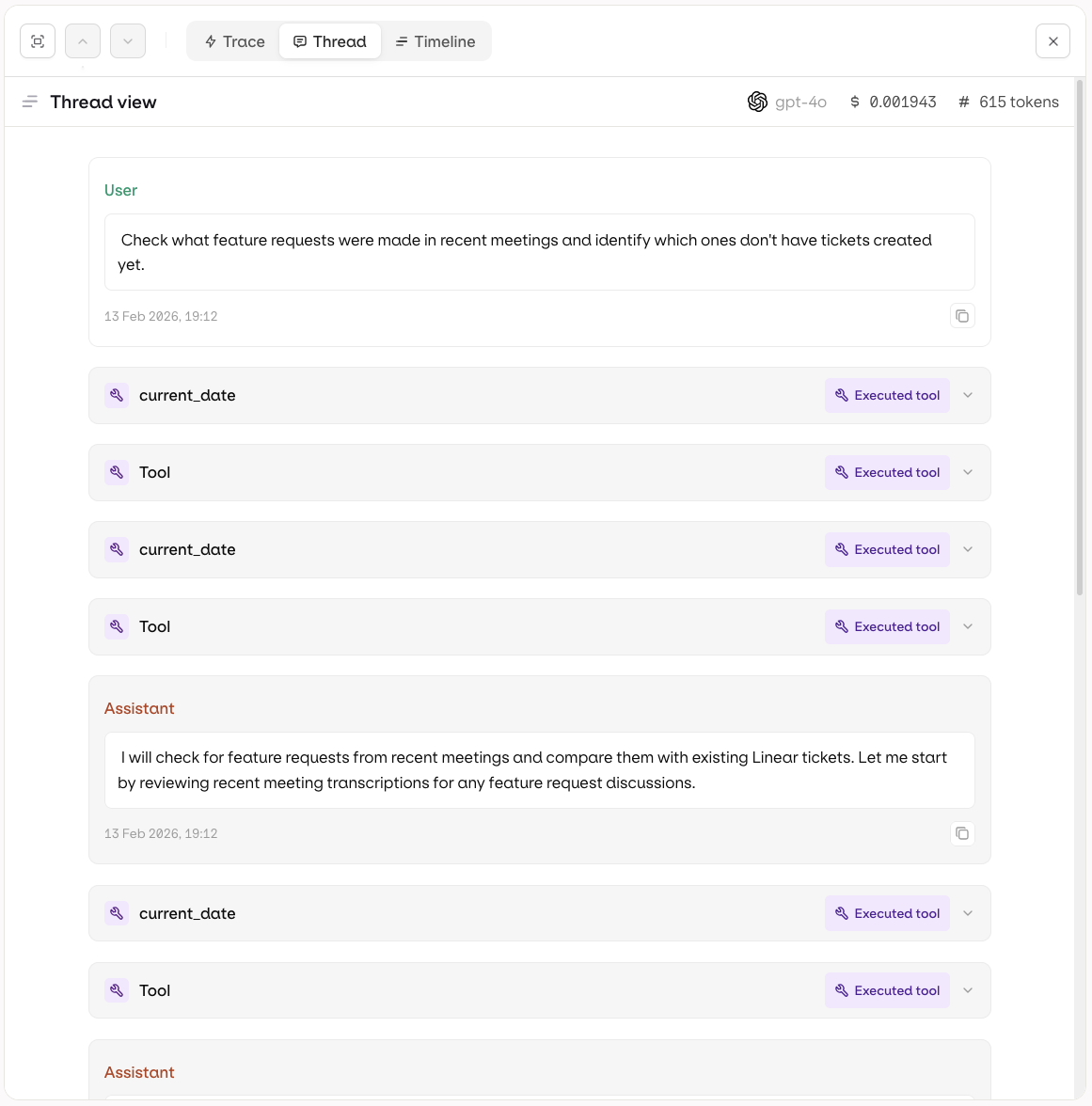

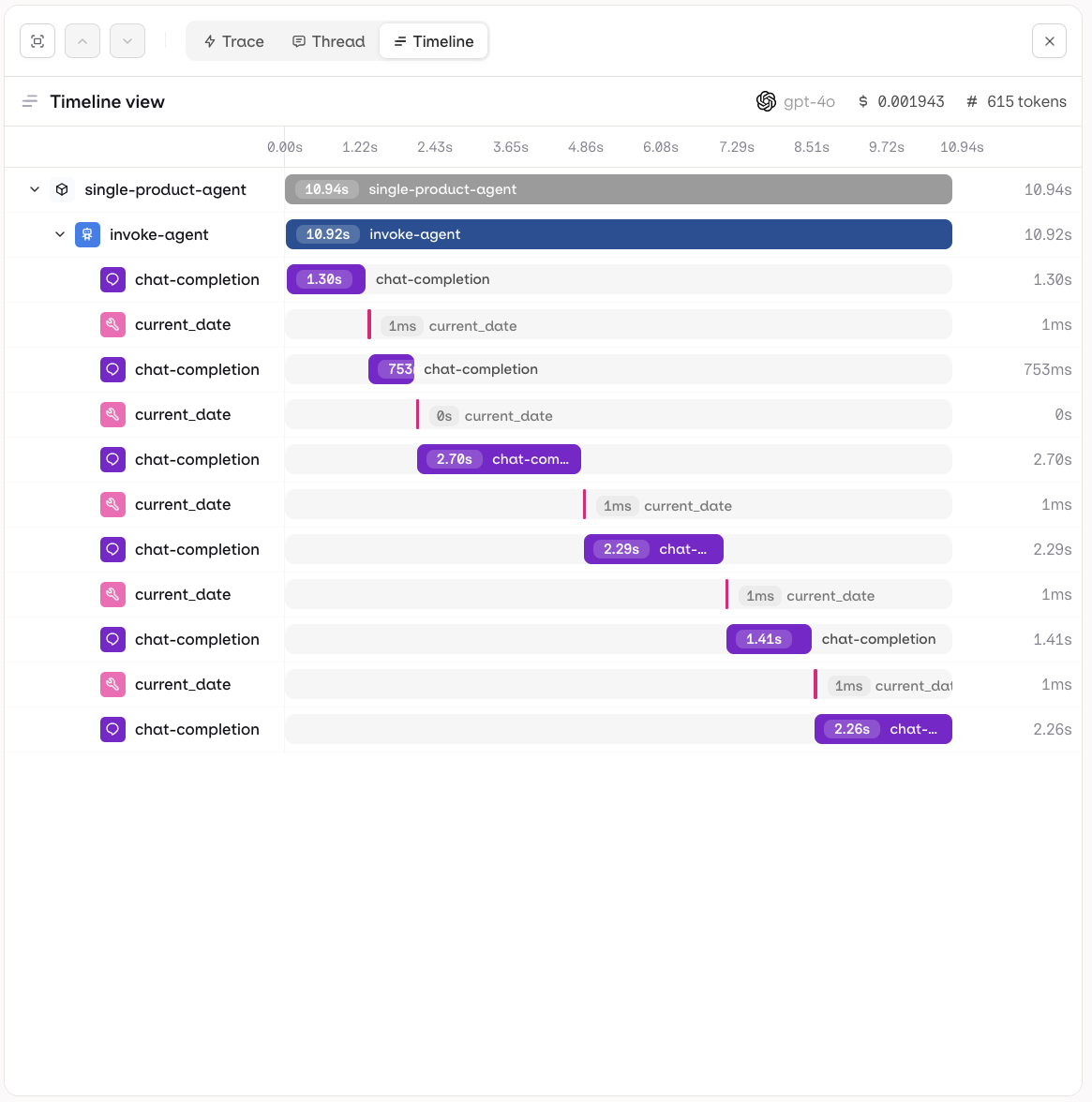

Each trace can be inspected in three views to suit different debugging and analysis needs.

- Trace

- Thread

- Timeline

The Trace view shows the full execution tree for a single run. Each step is displayed hierarchically, including LLM calls, tool invocations, knowledge retrievals, and memory interactions. Use this view to inspect inputs, outputs, token usage, and latency at every step.

For multi-agent runs, the hierarchy renders as an agent graph: parent agents and their sub-agent calls are shown as a nested tree, making it easy to follow how work was delegated across agents and where time was spent.

Manually evaluate responses using Human Reviews directly on individual spans. Human Reviews defined in the project are available on all spans automatically. Learn more in Human Review.

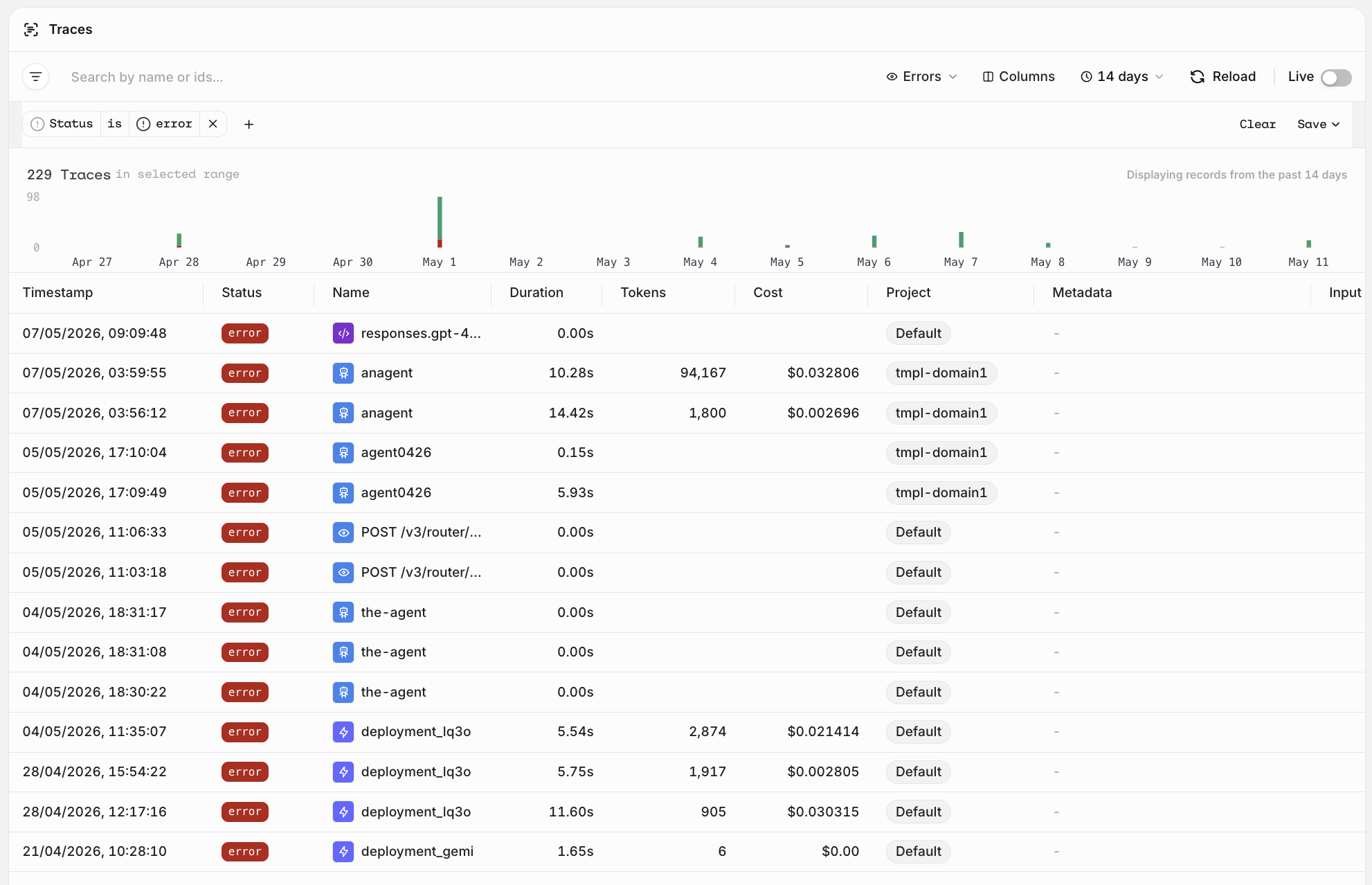

Viewing Errors

Traces that encountered an error are marked with a red error status badge in the list. Use the Status filter to scope the list to errors only and identify failing traces quickly.

- Filter by Status: error and an Identity to see all errors for a specific user.

- Combine with Project or Metadata to narrow down failures to a particular environment or deployment.

Filtering Traces

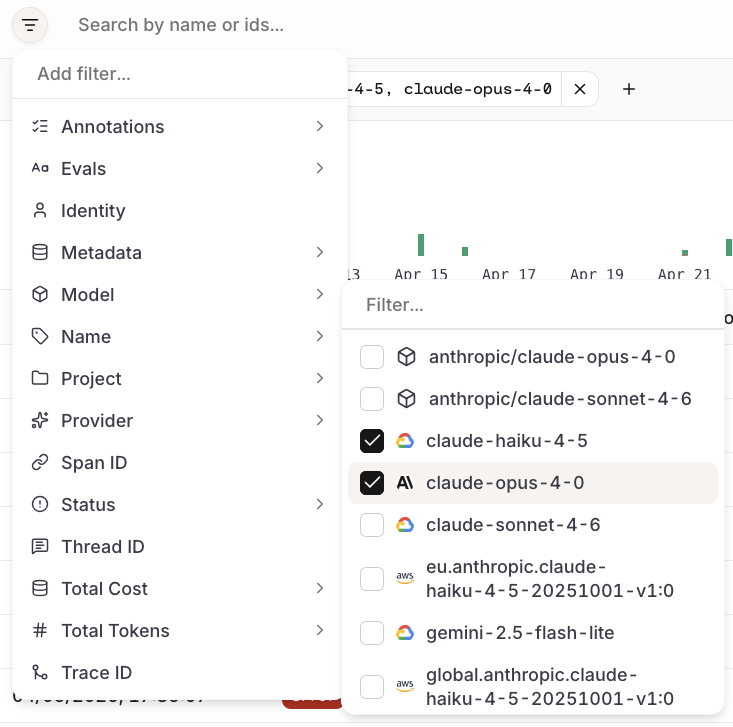

Click next to the search bar to open the filter menu. Select a category to expand a searchable list of values and check one or more to apply.

When working with Agents, access traces directly from the Agent page with automatic filtering for that specific agent.

| Filter | Description |
|---|---|
| Annotations | Filter by annotation labels applied to traces. |
| Evals | Filter by evaluator results attached to traces. |
| Identity | Filter by the identity that triggered the trace. |
| Metadata | Filter by metadata fields attached to traces. |
| Model | Filter by the model used in the trace. |
| Name | Filter by trace name. |
| Project | Filter by the project the trace belongs to. |
| Provider | Filter by the model provider. |
| Span ID | Filter by a specific span identifier. |
| Status | Filter by the status of the trace (e.g. error, success). |
| Thread ID | Filter by the thread the trace belongs to. |
| Total Cost | Filter by the total cost of the trace. |
| Total Tokens | Filter by the total token count of the trace. |
| Trace ID | Filter by a specific trace identifier. |

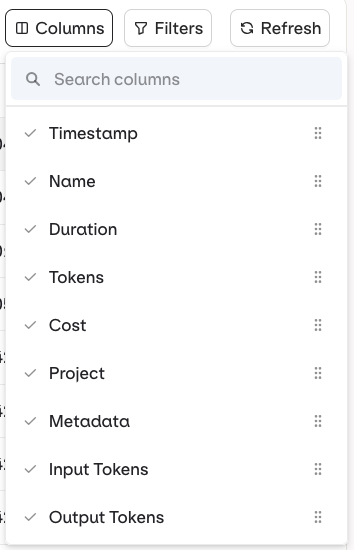

Configuring Columns

Toggle columns on or off to display more of the available data in the traces list.

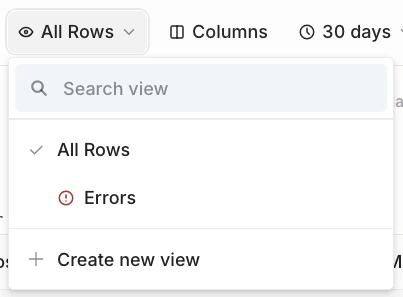

Creating Custom Views

Save frequently used filter combinations as reusable views:

- Set the desired filters

- Click All Rows (top right)

- Select Create New View

- Enter a title for the view

- Optionally check Set view private (default is shared with project members)

- The filtered view is saved and accessible from the All Rows dropdown

Threads

Visualize conversation history as Threads to follow the full sequence of messages across an agent session.