MCP

Claude Desktop is Anthropic’s desktop application that supports Model Context Protocol (MCP) integrations. By configuring the Orq MCP server, you can access all Orq.ai features directly in your Claude Desktop conversations.

Prerequisites

- Claude Desktop app installed

- Active Orq.ai account

- Orq.ai API key

- Node.js installed (required for `npx mcp-remote`)

Installation

You can configure the Orq MCP server through Claude Desktop Settings or using the Terminal.

Option 1: Settings Path (Recommended)

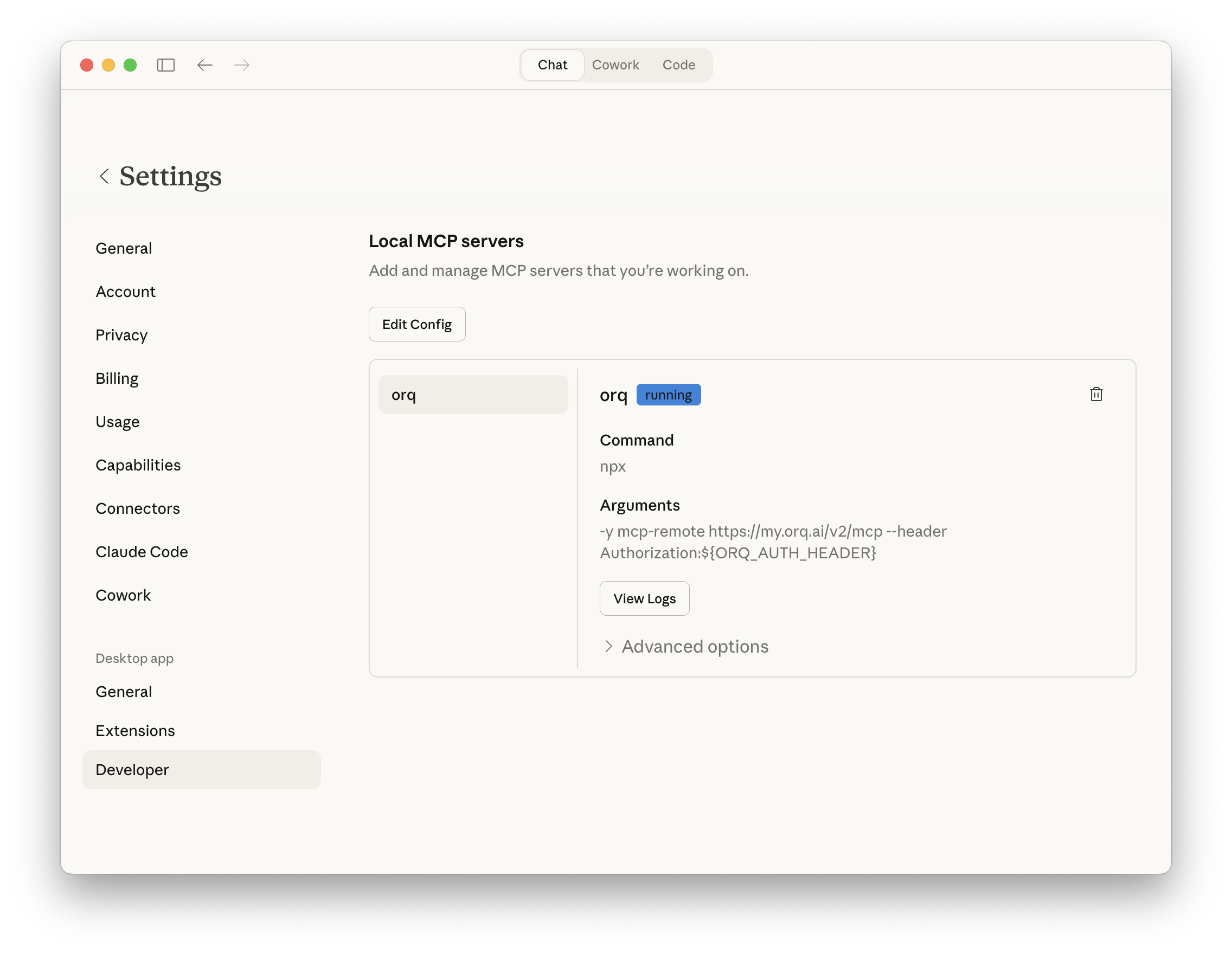

- Open Claude Desktop Settings by clicking Claude in the top-left menu, then select Settings

- Click Developer in the sidebar

- Click Edit Config to open the `claude_desktop_config.json` file

- Paste the Orq MCP server configuration into the file

- Replace `<ORQ_API_TOKEN>` with your actual API key from Workspace Settings → API Keys

- Save the file and restart Claude Desktop
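As a rough sketch of what an `orq` entry might look like and how to merge it into a config that already has content, the Python below adds the entry under `mcpServers` and leaves every other key alone. The `<ORQ_MCP_URL>` placeholder, the `merge_orq_server` helper, and the sample existing config are all hypothetical. The `--header` argument follows the `mcp-remote` convention for custom headers; copy the exact values from the Orq.ai documentation rather than from this example.

```python
import json

# Hypothetical placeholder: take the real MCP server URL from the Orq.ai docs.
ORQ_MCP_URL = "<ORQ_MCP_URL>"


def merge_orq_server(config: dict, api_token: str, url: str = ORQ_MCP_URL) -> dict:
    """Return a copy of `config` with an `orq` entry added under mcpServers."""
    merged = dict(config)  # top-level keys such as `preferences` are carried over
    servers = dict(merged.get("mcpServers", {}))  # keep servers already configured
    servers["orq"] = {
        "command": "npx",
        "args": [
            "mcp-remote",
            url,
            "--header",
            f"Authorization: Bearer {api_token}",
        ],
    }
    merged["mcpServers"] = servers
    return merged


# Illustrative existing file content, not taken from a real installation.
existing = {
    "preferences": {"theme": "dark"},
    "mcpServers": {"other": {"command": "node", "args": ["server.js"]}},
}

updated = merge_orq_server(existing, "<ORQ_API_TOKEN>")
print(json.dumps(updated, indent=2))
```

The helper works on copies, so the original dictionary is unchanged and can be compared against the result before anything is written back to disk.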

If the config file already has content, add the `orq` entry to the existing `mcpServers` object. Do not overwrite the `preferences` block or any other existing keys.

Option 2: Terminal Path

Replace `<ORQ_API_TOKEN>` with your actual API key, save the file, and restart Claude Desktop.

Verify Installation

After restarting Claude Desktop, start a new conversation and ask:

What You Can Do

Once connected, you can use natural language in Claude Desktop to perform these operations:

Agents

- Create an agent with custom instructions and tools

- Get agent configuration for [agent-key]

- Update agent [agent-key] with new instructions or model

- Configure agent with evaluators and guardrails

Analytics

- Get analytics overview for my workspace

- Show me workspace metrics for the last 7 days

- Query analytics filtered by deployment ID

Datasets

- Create a dataset called "customer-queries"

- List all datapoints in dataset [dataset-key]

- Add datapoints to dataset [dataset-key]

- Update datapoint [datapoint-id]

- Delete specific datapoints in dataset [dataset-key]

- Delete dataset [dataset-key]

Experiments

- Create an experiment from dataset [dataset-key]

- List all experiment runs

- Export experiment run [run-id] as CSV

- Run experiment and auto-evaluate results

Evaluators

- Get evaluator configuration for [evaluator-key]

- Create an LLM-as-a-Judge evaluator for tone

- Create a Python evaluator to check response length

- Add evaluator to experiment [experiment-key]

- Update evaluator [evaluator-key] with a new prompt

- Update Python evaluator [evaluator-key] with revised code

Traces

- List traces from the last 24 hours

- Show me traces with errors

- Get span details for trace [trace-id]

- Find the slowest traces from today

- Show all traces for thread [thread-id]

Models

- List all available chat models

- List all available embedding models

Registry

- List registry keys for filtering traces

- List top values for [attribute-key]

Search

- Search for datasets named "customer"

- Find experiments in project [project-id]

- List directories in project [project-id]

Documentation


Search the Orq.ai docs for [topic]

Managing Entities

- Delete agent [agent-key]

- Delete experiment [experiment-key]

- Delete evaluator [evaluator-key]

- Delete prompt [prompt-key]

- Delete knowledge base [knowledge-base-key]

Use `delete_dataset` to delete a dataset along with all its datapoints.

Usage Examples

Create Experiments

- Use `search_entities` to find the “customer-queries” dataset

- Use `create_experiment` with the name “Model Comparison Test” and auto-run enabled

- Configure two task columns (one for GPT-5.2, one for Claude Sonnet 4.6)

- Execute both models against the dataset automatically via the auto-run option

- Provide a summary of the results with evaluation metrics

Analyze Traces

- Calculate the time range for the last 24 hours

- Use `list_traces` with error status filter

- Analyze the trace data

- Provide error count and types, affected deployments, time distribution, and suggested fixes based on error patterns
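The first step above, calculating the time range, can be sketched as follows. This is only an illustration of the arithmetic: the `last_hours_range` helper is hypothetical, and the use of ISO 8601 UTC timestamps is an assumption, since the parameters that `list_traces` actually accepts are not shown here.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional, Tuple


def last_hours_range(hours: int, now: Optional[datetime] = None) -> Tuple[str, str]:
    """Return (start, end) ISO 8601 timestamps covering the last `hours` hours."""
    end = now or datetime.now(timezone.utc)  # default to the current UTC time
    start = end - timedelta(hours=hours)
    return start.isoformat(), end.isoformat()


# A fixed reference time keeps the example reproducible.
reference = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
start, end = last_hours_range(24, now=reference)
print(start, end)
```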

Generate Synthetic Datasets

- Generate 100 realistic customer support conversation examples (questions and expected responses)

- Use `create_dataset` to create a new dataset named “Support Training”

- Use `create_datapoints` to add all 100 conversations to the dataset

- Confirm creation with the dataset ID and sample of generated data

Performance Analysis

- Use `query_analytics` with a 7-day time range

- Analyze average latency changes over the week

- Review token usage patterns and cost trends

- Examine error rate fluctuations

- Compare performance across different models

- Provide a summary report with insights on whether performance has improved or decreased
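To make the "improved or decreased" judgment concrete, one simple approach is to compare the average latency at the start of the week with the average at the end. The sketch below uses made-up daily values purely for illustration; the `percent_change` helper and the three-day windows are assumptions, not something `query_analytics` returns.

```python
from statistics import mean


def percent_change(earlier: list, later: list) -> float:
    """Percentage change from the mean of `earlier` to the mean of `later`."""
    before, after = mean(earlier), mean(later)
    return (after - before) / before * 100.0


# Made-up daily average latencies in milliseconds, oldest first.
daily_latency_ms = [420, 410, 430, 400, 380, 370, 360]

# Compare the first three days with the last three days.
change = percent_change(daily_latency_ms[:3], daily_latency_ms[-3:])
trend = "improved" if change < 0 else "worsened"
print(f"Average latency {trend} by {abs(change):.1f}%")
```

A negative change means latency dropped, which counts as an improvement. The same helper works for cost or error-rate series, where lower is also better.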

Troubleshooting

MCP Server Not Connecting


- Verify the config file path is correct for your OS

- Check the JSON syntax is valid (no trailing commas, proper quotes)

- Ensure your API key is valid and has the required permissions

- Restart Claude Desktop after making config changes

- Check the Claude Desktop logs for error messages
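The first two checks can be scripted. The sketch below is a minimal example, assuming the config file lives in the usual Claude Desktop locations on macOS and Windows; confirm the path through Settings → Developer → Edit Config if in doubt. The `default_config_path` and `check_config` helpers are hypothetical names.

```python
import json
import os
import sys
from pathlib import Path


def default_config_path(platform: str = sys.platform) -> Path:
    """Best-guess location of claude_desktop_config.json for the given platform."""
    if platform == "darwin":
        return (Path.home() / "Library" / "Application Support"
                / "Claude" / "claude_desktop_config.json")
    if platform.startswith("win"):
        return Path(os.environ.get("APPDATA", "")) / "Claude" / "claude_desktop_config.json"
    raise ValueError(f"no known default config path for platform {platform!r}")


def check_config(text: str) -> list:
    """Return a list of problems found in the config text (empty if none)."""
    try:
        config = json.loads(text)  # rejects trailing commas and single quotes
    except json.JSONDecodeError as exc:
        return [f"invalid JSON at line {exc.lineno}, column {exc.colno}: {exc.msg}"]
    if not isinstance(config, dict):
        return ["top-level value is not a JSON object"]
    if "orq" not in config.get("mcpServers", {}):
        return ["no `orq` entry under mcpServers"]
    return []


# A trailing comma is a common mistake and is reported as invalid JSON.
print(check_config('{"mcpServers": {"orq": {}},}'))
print(check_config('{"mcpServers": {"orq": {}}}'))
```

To check the real file, pass `default_config_path().read_text()` to `check_config`.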

Authentication Errors


- Confirm your API key is active in Orq.ai Settings

- Make sure the API key has workspace access permissions

- Verify the `Authorization` header format: `Bearer YOUR_KEY`

- Try generating a new API key if the current one is expired

Slow Responses


- Be patient with large dataset operations

- Break complex workflows into smaller steps

- Check Orq.ai service status at status.orq.ai

Tool Not Found


- Verify the Orq MCP server is properly configured in your config file

- Restart Claude Desktop to reload the Orq MCP configuration

- Try rephrasing your request

- Check the MCP tools list

Additional Configuration

Multiple Workspaces

If you work with multiple Orq.ai workspaces, you can configure multiple MCP servers.

Skills

Skills add pre-built agentic workflows to Claude for the full Build, Evaluate, Optimize lifecycle. See the Skills page for the full reference.

Installation

Skills for Claude Desktop are managed through the Claude.ai web interface and automatically apply across all your Claude clients, including the desktop app.

Available Skills

Once installed, Claude picks the right skill automatically based on what you describe.

| Skill | Description |
|---|---|
| build-agent | Design, create, and configure an Orq.ai agent |
| build-evaluator | Create validated LLM-as-a-Judge evaluators |
| analyze-trace-failures | Read production traces and categorize failures |
| run-experiment | Create and run experiments with evaluation |
| generate-synthetic-dataset | Generate and curate evaluation datasets |
| optimize-prompt | Analyze and optimize system prompts |
| setup-observability | Instrument LLM applications with Orq.ai tracing: AI Gateway for zero-code traces, or OpenTelemetry for framework-level spans |
| compare-agents | Run cross-framework agent comparisons using evaluatorq |

Slash commands (`/orq:quickstart`, `/orq:traces`, etc.) are only available in Claude Code. See Skills for details.

Third-party inference (Cowork)

Claude Cowork’s third-party inference mode routes all model inference through a configured gateway instead of Anthropic’s first-party infrastructure. Orq.ai’s AI Gateway speaks the Anthropic Messages API and is fully compatible.

- EU data residency

- Provider fallback

- Cost control

Prerequisites

- Claude Desktop installed with a Pro, Max, Team, or Enterprise plan

- Active Orq.ai account with an API key

Setup

Enable Developer Mode

- Open Claude Desktop

- Click Help in the menu bar

- Hover over Troubleshooting

- Select Enable Developer Mode

- Restart Claude Desktop

Open Gateway Configuration

- Click Developer in the menu bar

- Select Configure Third-party inference

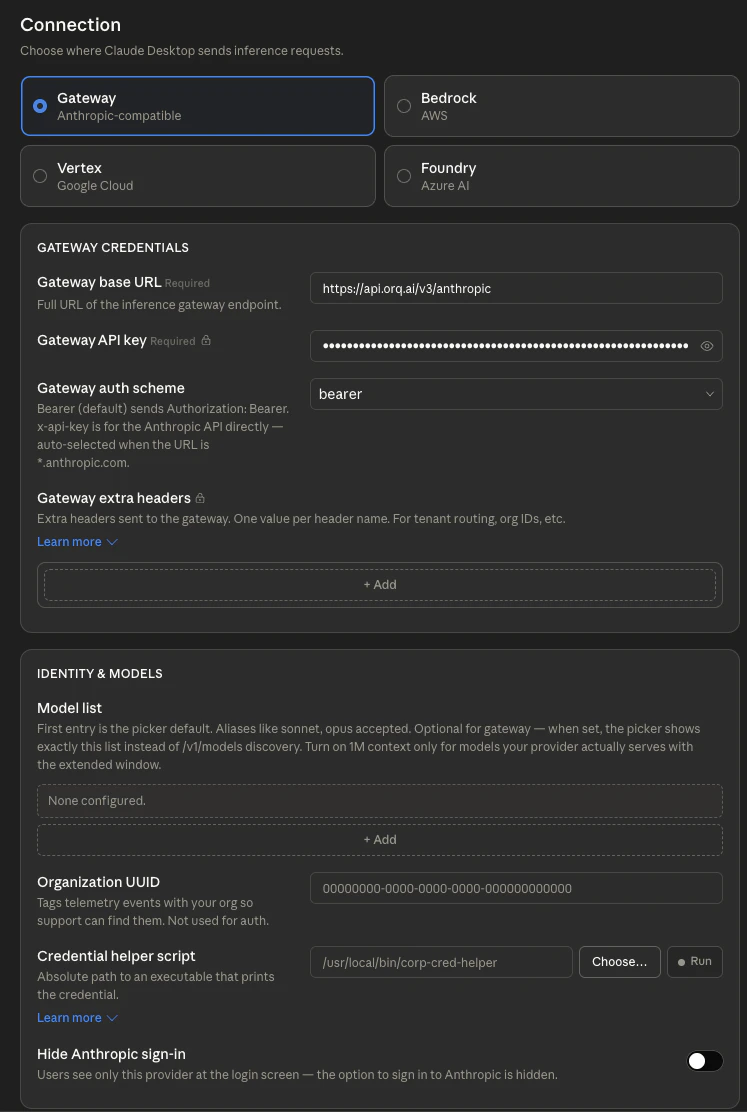

- Select Gateway (Anthropic-compatible)

Enter Gateway Details

| Field | Value |
|---|---|
| Gateway base URL | https://api.orq.ai/v3/anthropic |
| Gateway API key | Your Orq.ai API key |
| Gateway auth scheme | bearer |
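To see how these three values fit together, the sketch below builds (but does not send) an Anthropic Messages API request against the gateway. It assumes the gateway exposes the standard `/v1/messages` path under the base URL and accepts the usual `anthropic-version` header; neither detail is confirmed here, and `build_messages_request` is a hypothetical helper. The model slug follows the `provider/model-name` format.

```python
import json
import urllib.request

GATEWAY_BASE_URL = "https://api.orq.ai/v3/anthropic"


def build_messages_request(api_key: str, model: str, prompt: str,
                           base_url: str = GATEWAY_BASE_URL) -> urllib.request.Request:
    """Build a Messages API request that authenticates with a bearer token."""
    body = {
        "model": model,
        "max_tokens": 64,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/messages",  # assumed path, per the Messages API convention
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # matches the `bearer` auth scheme
            "anthropic-version": "2023-06-01",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_messages_request("<ORQ_API_KEY>", "anthropic/claude-sonnet-4-6", "ping")
print(request.get_method(), request.full_url)
# Sending it with a real key: urllib.request.urlopen(request)
```

A successful response to a request like this is a quick way to confirm the key and base URL before entering them in Claude Desktop.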

Add Models (optional)

Models are discovered automatically through the `/v1/models` endpoint, so no manual configuration is required. To pin a specific subset, add model slugs using the `provider/model-name` format:

| Model slug | Description |
|---|---|
| anthropic/claude-sonnet-4-6 | Claude Sonnet via Anthropic direct |
| aws/anthropic/claude-sonnet-4-6 | Claude Sonnet via AWS Bedrock |
| google/anthropic/claude-opus-4-7 | Claude Opus via Google Vertex AI |
| openai/gpt-4o | GPT-4o for routine tasks |

See Also

API Keys

Supported Models

AI Gateway