Observability

Instrument your code with OpenTelemetry to capture traces, logs, and metrics for every LLM call, agent step, and tool use.

Getting Started

LiteLLM provides a unified interface for multiple LLM providers, enabling seamless switching between OpenAI, Anthropic, Cohere, and 100+ other providers. Tracing LiteLLM with Orq.ai gives you comprehensive insights into provider performance, cost optimization, routing decisions, and API reliability across your multi-provider setup.

Prerequisites

Before you begin, ensure you have:

- An Orq.ai account and API Key

- LiteLLM installed in your project

- Python 3.8+

- API keys for your LLM providers (OpenAI, Anthropic, Cohere, etc.)

Install Dependencies

Configure Orq.ai

Set up your environment variables to connect to Orq.ai’s OpenTelemetry collector:

Unix/Linux/macOS:

Integrations
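Whichever framework is used, the exporter reads the connection settings from the configuration step out of environment variables. The sketch below sets the standard OpenTelemetry variables `OTEL_EXPORTER_OTLP_ENDPOINT` and `OTEL_EXPORTER_OTLP_HEADERS` from Python instead of the shell. The endpoint placeholder, the `ORQ_API_KEY` variable name, the bearer-token header format, and both helper functions are assumptions made for illustration; take the real values from your Orq.ai workspace.

```python
import os

# Hypothetical placeholders: neither value comes from the original page.
# Use the collector URL and API key shown in your Orq.ai workspace.
ORQ_OTLP_ENDPOINT = "https://<your-orq-otlp-endpoint>"
ORQ_API_KEY = os.environ.get("ORQ_API_KEY", "<your-orq-api-key>")


def build_otel_env(endpoint: str, api_key: str) -> dict:
    """Return the standard OTLP exporter variables for a collector.

    Assumes the collector accepts a bearer token in the Authorization header.
    Some exporters expect the space to be percent-encoded ("Bearer%20...").
    """
    return {
        "OTEL_EXPORTER_OTLP_ENDPOINT": endpoint,
        "OTEL_EXPORTER_OTLP_HEADERS": f"Authorization=Bearer {api_key}",
    }


def apply_otel_env(endpoint: str, api_key: str) -> None:
    """Write the variables into this process before any tracer is created."""
    os.environ.update(build_otel_env(endpoint, api_key))


if __name__ == "__main__":
    apply_otel_env(ORQ_OTLP_ENDPOINT, ORQ_API_KEY)
    print(os.environ["OTEL_EXPORTER_OTLP_ENDPOINT"])
```

Setting the variables in-process only works if it happens before the OpenTelemetry exporter is initialized, so call `apply_otel_env` at the very start of the program.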

Choose your preferred OpenTelemetry framework for collecting traces:

LiteLLM

Auto-instrumentation with minimal setup:

Examples
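As a sketch of the auto-instrumentation path combined with a basic multi-provider call, the code below turns on LiteLLM's OpenTelemetry callback and sends the same prompt to several models through `litellm.completion`. It assumes that `litellm` and the OpenTelemetry SDK are installed, that the callback is registered under the name `"otel"` in your LiteLLM version, and that provider API keys are already set in the environment. The helper names and the model identifiers are illustrative, not taken from the original page.

```python
from typing import Callable, Dict, List, Optional


def enable_litellm_otel() -> None:
    """Register LiteLLM's built-in OpenTelemetry callback."""
    import litellm  # lazy import so the helper below can be used without it

    # Assumption: the callback name "otel"; confirm against your LiteLLM version.
    litellm.callbacks = ["otel"]


def ask_each_provider(
    models: List[str],
    prompt: str,
    complete: Optional[Callable] = None,
) -> Dict[str, str]:
    """Send one prompt to every model and collect the text of each reply.

    `complete` defaults to `litellm.completion`; passing another callable with
    the same keyword arguments makes the function easy to exercise offline.
    """
    if complete is None:
        import litellm

        complete = litellm.completion

    messages = [{"role": "user", "content": prompt}]
    answers: Dict[str, str] = {}
    for model in models:
        response = complete(model=model, messages=messages)
        answers[model] = response.choices[0].message.content
    return answers


if __name__ == "__main__":
    enable_litellm_otel()
    # Illustrative model identifiers; substitute the ones your keys can access.
    replies = ask_each_provider(
        ["gpt-4o-mini", "claude-3-haiku-20240307"],
        "Summarize OpenTelemetry in one sentence.",
    )
    for model, text in replies.items():
        print(f"{model}: {text}")
```

Because every call goes through the same `completion` function, each provider request should produce its own span once the callback is active, which is what makes per-provider comparison possible in the trace view.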

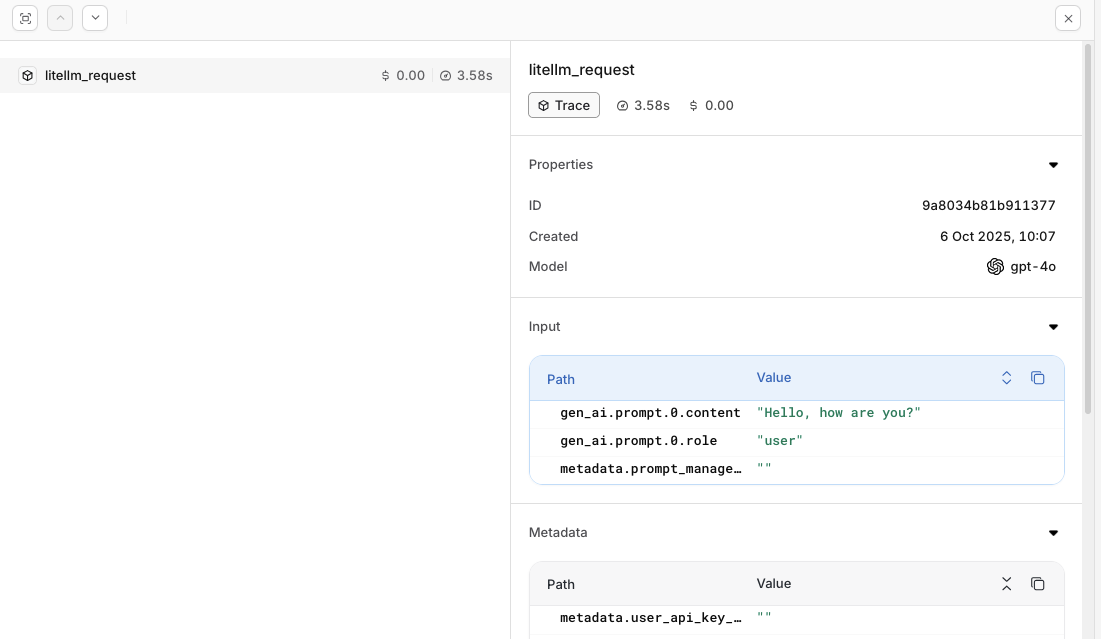

Basic Multi-Provider Usage

View Traces

Head to the Traces tab to view LiteLLM traces in the AI Studio.

Evaluations & Experiments

Once your agents are running, use Evaluatorq to score outputs across a dataset and Experiments to compare configurations side-by-side.

Run Evaluations with Evaluatorq

Run parallel evaluations across your agents and compare results.

Run Experiments via the API

Compare agent configurations and view results in the AI Studio.