Logs give you a detailed record of every LLM generation made through Deployments and Playgrounds. Use them to monitor successful and failed requests, understand the parameters used, and reproduce issues.

Finding Logs

Logs are available in Deployments and Playgrounds. You can find them in the Logs tab of each section.

Inside the Logs

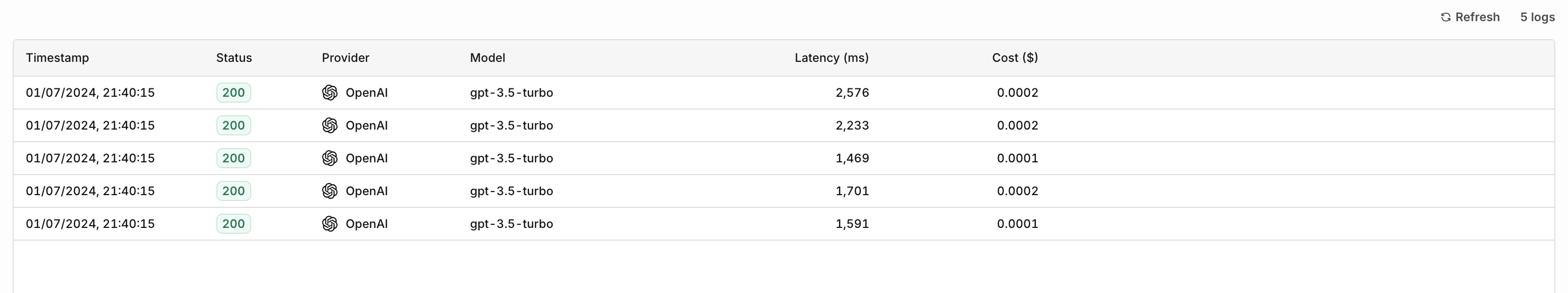

Overview

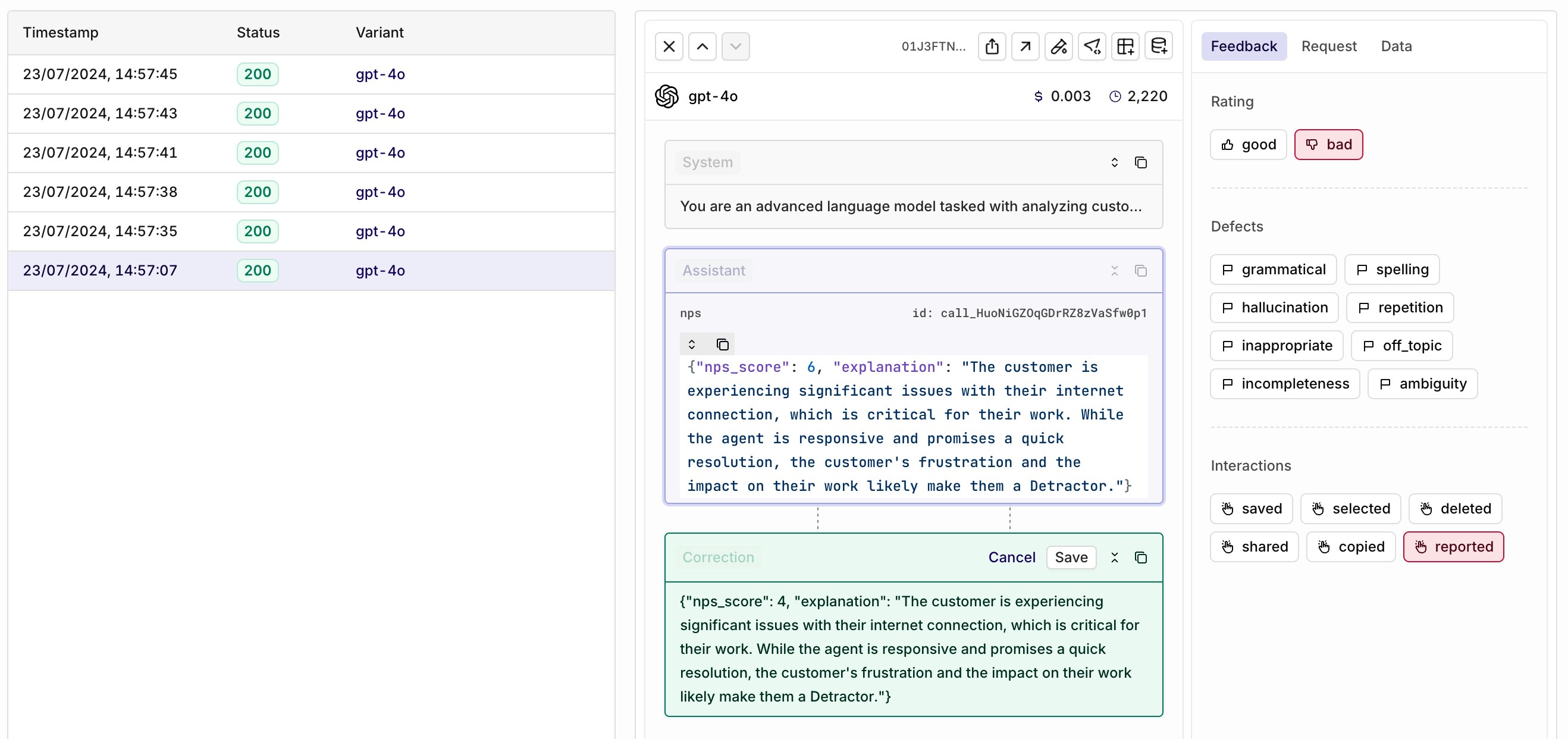

The logs overview lists all logs, ordered by recency, and you can dive into any of them. For each log, the overview shows the precise timestamp along with key details: status, provider, model, latency, and cost.
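If you work with log data outside the UI, the overview columns map naturally onto a small record type. The following is a minimal sketch only: the `LogEntry` class, its field names, the units, the status values, and the sample entries are all hypothetical placeholders chosen for illustration, not part of the Orq API or any export format.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class LogEntry:
    # Hypothetical field names mirroring the overview columns;
    # the units (milliseconds, USD) are assumptions.
    timestamp: datetime
    status: str
    provider: str
    model: str
    latency_ms: float
    cost_usd: float


def newest_first(entries):
    """Return entries ordered by recency, as the overview lists them."""
    return sorted(entries, key=lambda e: e.timestamp, reverse=True)


def failed(entries):
    """Keep only entries whose status is not 'success' (assumed status value)."""
    return [e for e in entries if e.status != "success"]


# Illustrative placeholder data, not real logs.
entries = [
    LogEntry(datetime(2024, 1, 1, 12, 0, 0), "success", "provider-a", "model-x", 820.0, 0.0031),
    LogEntry(datetime(2024, 1, 1, 12, 5, 0), "error", "provider-b", "model-y", 150.0, 0.0),
]

ordered = newest_first(entries)
failures = failed(entries)
```

Sorting on the timestamp reproduces the recency ordering of the overview, and filtering on status is one way to isolate failed requests for closer inspection.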

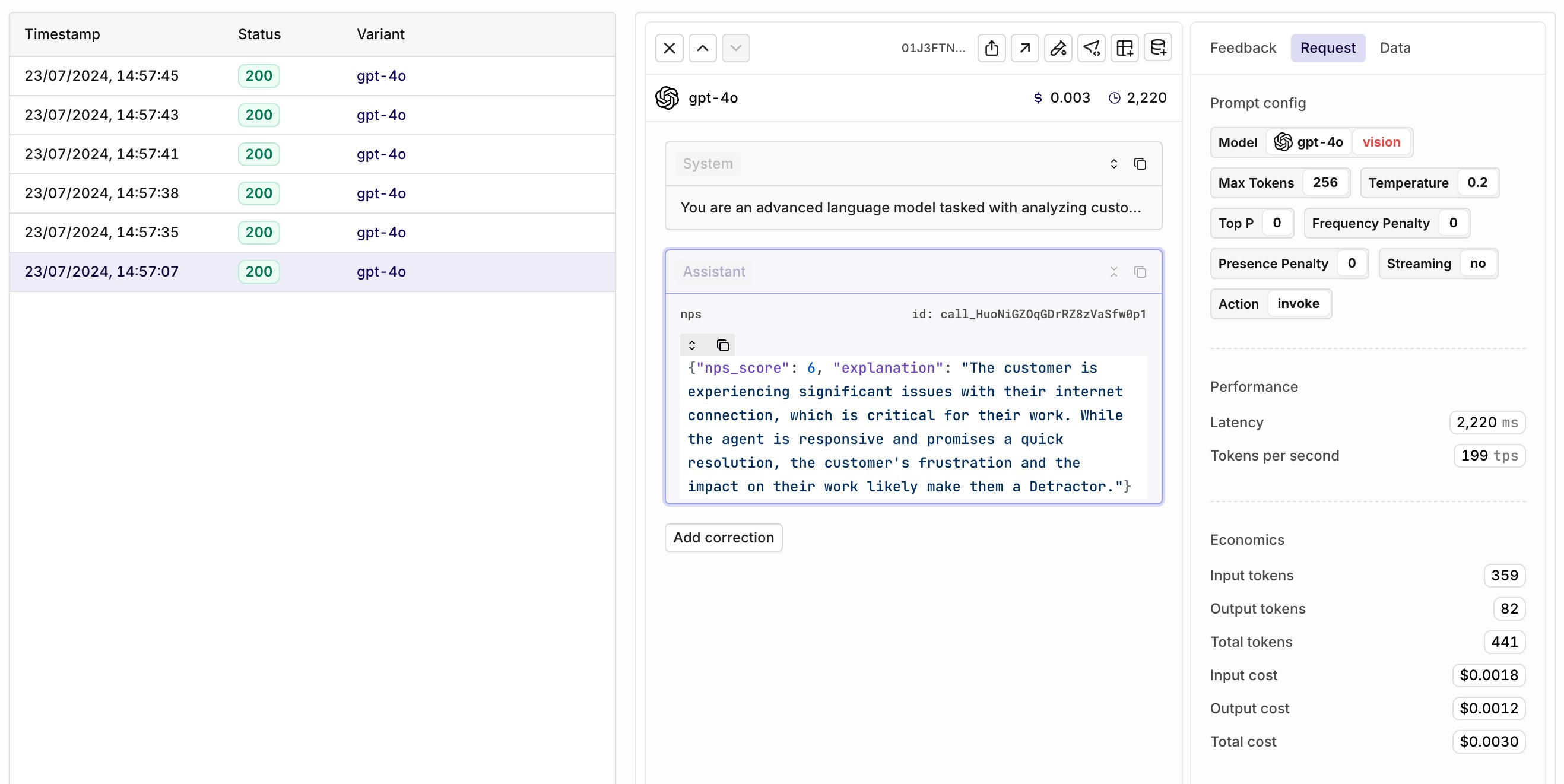

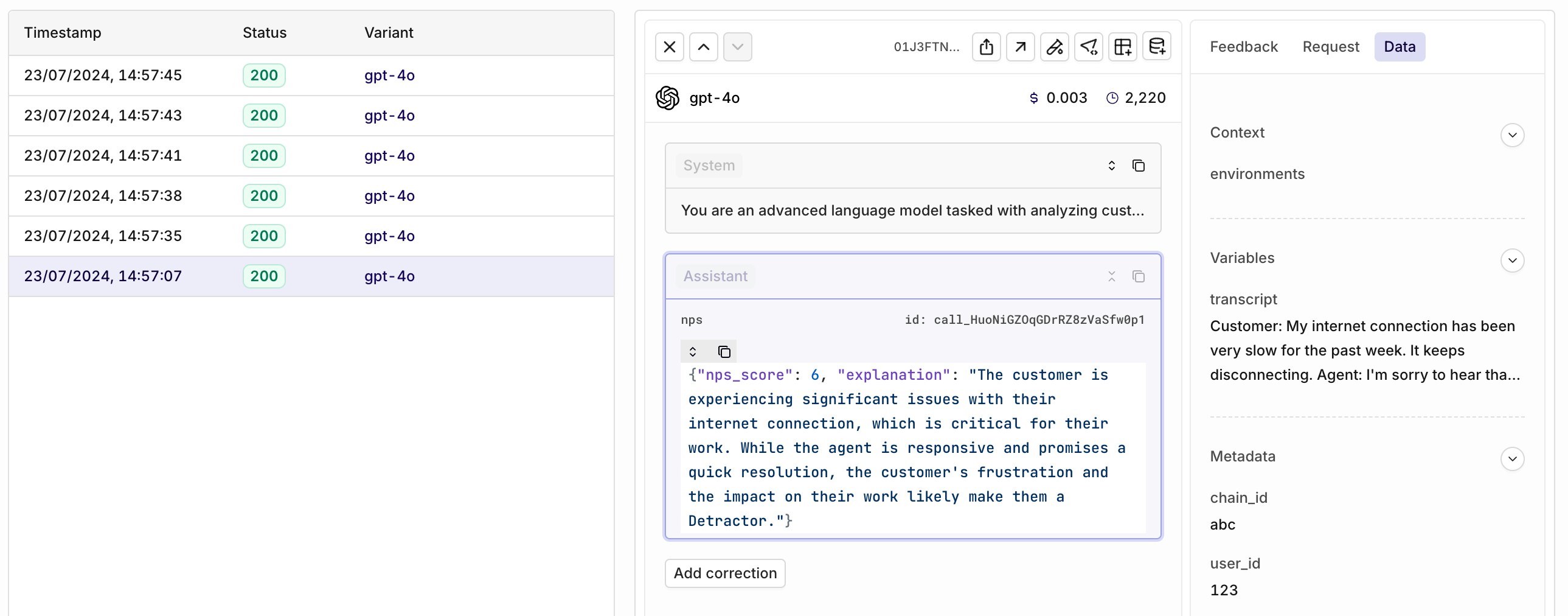

Request

To learn more about the technical request being made, select the Request panel on the right of your log detail. Here you can see the full model configuration, as well as the execution latency and cost of the generation.

Feedback

You can provide feedback on the quality and accuracy of the generations made within the log.

To learn more, see Feedback.

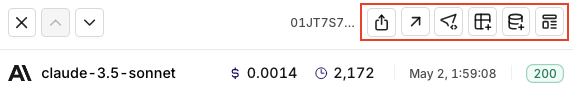

Hyperlinking

When you want to copy the exact same LLM configuration (prompt, model, etc.) to another module within Orq, you can “hyperlink” it. The buttons highlighted in red enable you to:

- Share

- Run in Playgrounds

- Create a Deployment

- Add Variant to Deployment

- Add to Dataset

- Create Prompt