Annotations are structured key-value pairs that capture human feedback on traces and spans in your observability data. They enable quality assessment, human review workflows, and training dataset curation from human-reviewed traces.

Use Cases

Collecting quality feedback

Capture thumbs up/down ratings, custom scores, or categorical labels on AI responses. Build a feedback loop that surfaces low-quality generations for review.

Compliance and QA review

Flag responses with specific defects (hallucination, off-topic, inappropriate content) using structured annotation keys shared across your team.

Dataset curation

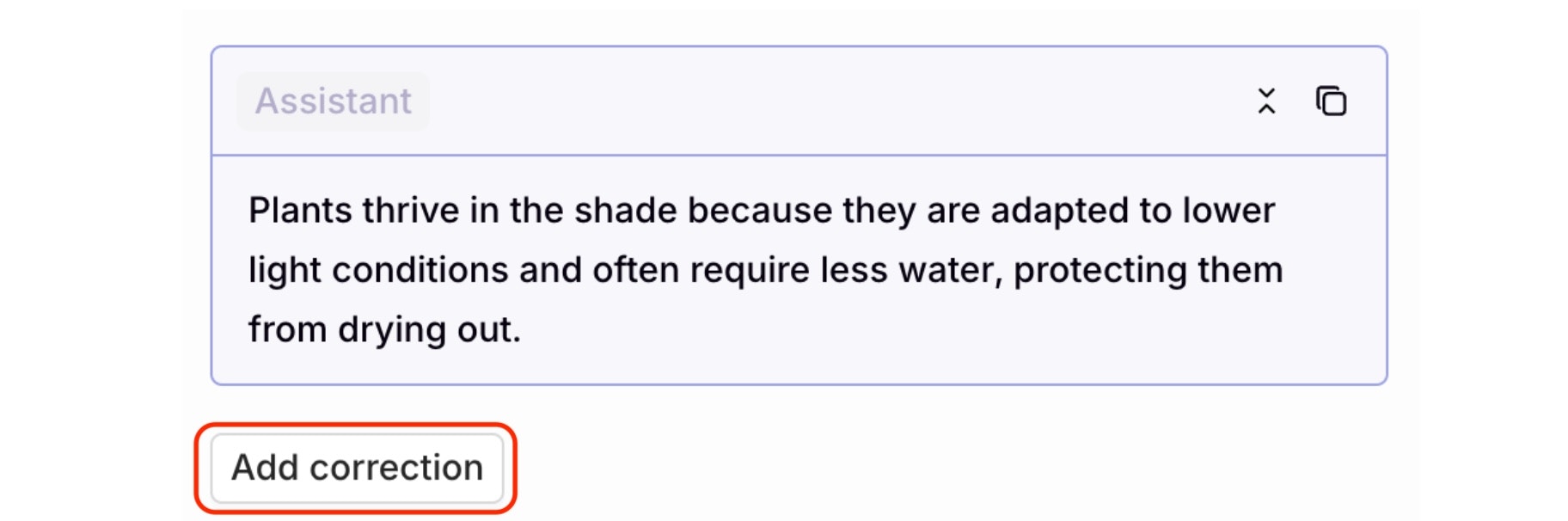

Annotate traces with corrections and quality labels, then export curated subsets as training datasets for future experiments.
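As a rough illustration of the curation step, the sketch below turns annotated traces into JSON Lines rows. The record shape (`trace_id`, `input`, `output`, `annotations`) and the `rating` and `correction` keys are assumptions made for this example, not a documented export format.

```python
import json

# Hypothetical annotated traces; field names are illustrative assumptions.
traces = [
    {"trace_id": "t1", "input": "What is 2 + 2?", "output": "4",
     "annotations": {"rating": "good"}},
    {"trace_id": "t2", "input": "Capital of France?", "output": "Berlin",
     "annotations": {"rating": "bad", "correction": "Paris"}},
    {"trace_id": "t3", "input": "Say hi", "output": "Hello!",
     "annotations": {}},
]


def curate(traces):
    """Keep reviewed traces, preferring a human correction over the raw output."""
    rows = []
    for trace in traces:
        notes = trace["annotations"]
        if "correction" in notes:
            target = notes["correction"]
        elif notes.get("rating") == "good":
            target = trace["output"]
        else:
            continue  # unreviewed, or rated bad with no correction
        rows.append({"input": trace["input"], "expected_output": target})
    return rows


def to_jsonl(rows):
    """Serialize rows as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(row) for row in rows)


dataset = curate(traces)
print(to_jsonl(dataset))
```

Here a correction always wins over the original output, and unreviewed traces are dropped so that only human-vetted examples reach the dataset.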

Human-in-the-loop workflows

Route traces to annotation queues for systematic expert review. Combine with Trace Automations to automatically surface traces that meet specific criteria.

- Human Review: defines the schema (key, value type, options) that annotations must conform to

- Annotations: the actual feedback values applied to a trace or span

- Annotation Queues: organized workflows for reviewing traces in bulk via the AI Studio

Human Review

Define annotation schemas: keys, value types, and validation rules. Available on all traces and spans in the project once created.

Annotations

Apply structured human feedback to traces and spans via the AI Studio or programmatically via the API.

Annotation Queues

Organize human review workflows. Filter and present relevant traces for review in bulk.

Create Human Review

Human Reviews define the structure and validation rules for annotations. Each annotation key must match an existing Human Review definition in the project.

AI Studio

To create a Human Review, head to Project Settings > Human Review and press the + button. The following types are available:

- Categorical: button options with custom labels, such as good/bad or saved/deleted

- Range: a custom scoring slider, for example a scale from 0 to 100

- Open field: free-form text input for detailed comments

Once created, a Human Review is available on all traces and spans in the project. No additional configuration or filtering required.
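To make the three types concrete, here is a minimal local model of how a value could be checked against a Human Review definition. The class names, fields, and `is_valid` helper are hypothetical and exist only for this sketch; they are not part of the platform's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Categorical:
    key: str
    options: tuple  # allowed button labels, e.g. ("good", "bad")


@dataclass(frozen=True)
class Range:
    key: str
    minimum: float
    maximum: float


@dataclass(frozen=True)
class OpenField:
    key: str


def is_valid(review, value):
    """Check a candidate annotation value against a (hypothetical) schema."""
    if isinstance(review, Categorical):
        return value in review.options
    if isinstance(review, Range):
        # bool is a subclass of int in Python, so exclude it explicitly.
        is_number = isinstance(value, (int, float)) and not isinstance(value, bool)
        return is_number and review.minimum <= value <= review.maximum
    if isinstance(review, OpenField):
        return isinstance(value, str) and value.strip() != ""
    return False


rating = Categorical("rating", ("good", "bad"))
score = Range("score", 0, 100)
comment = OpenField("comment")

print(is_valid(rating, "good"), is_valid(score, 101), is_valid(comment, "Too vague"))
```

Rejecting empty comments is a choice made for this sketch; the source does not say whether open fields may be left blank.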

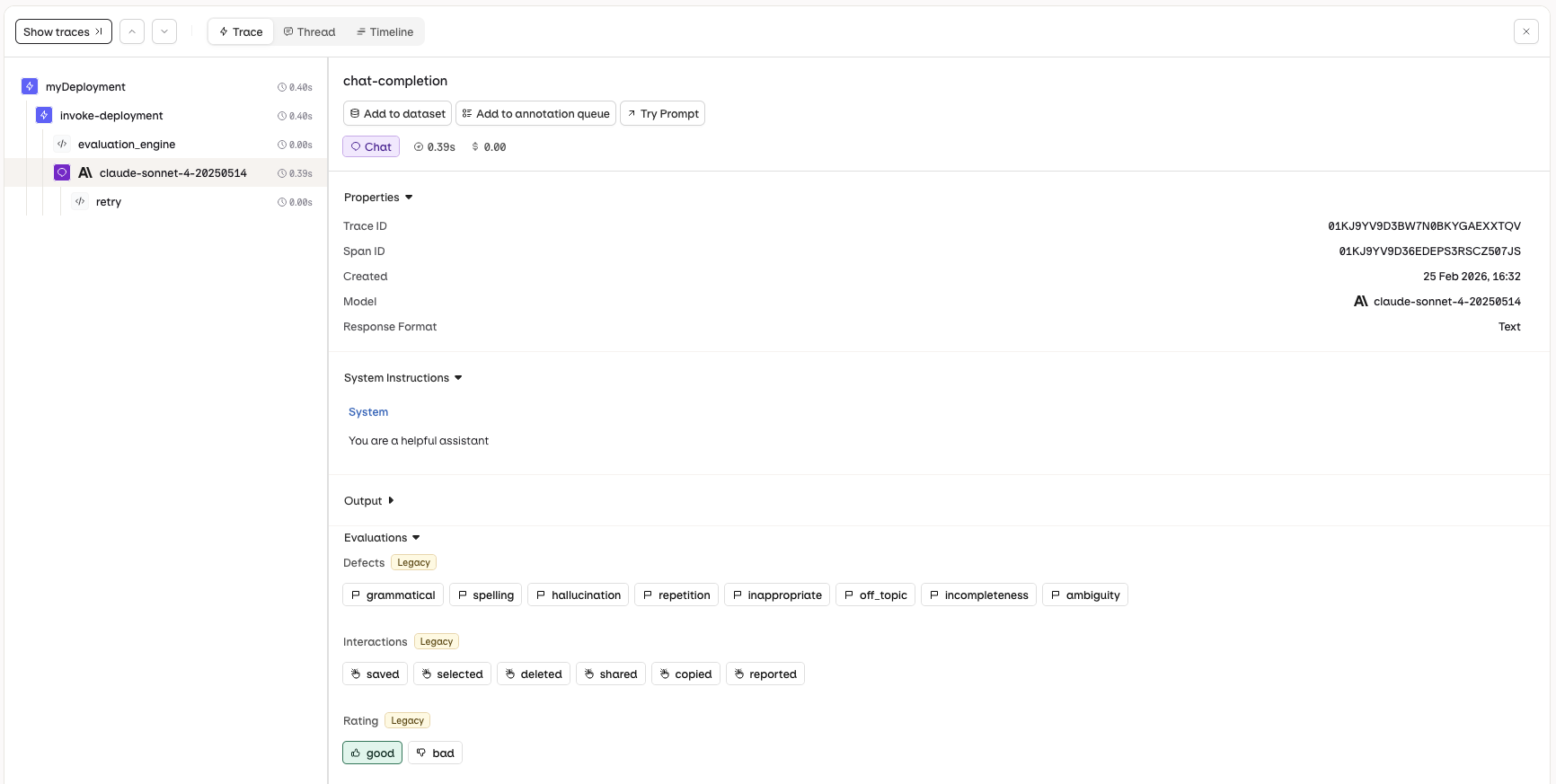

Common Annotation Types (Legacy)

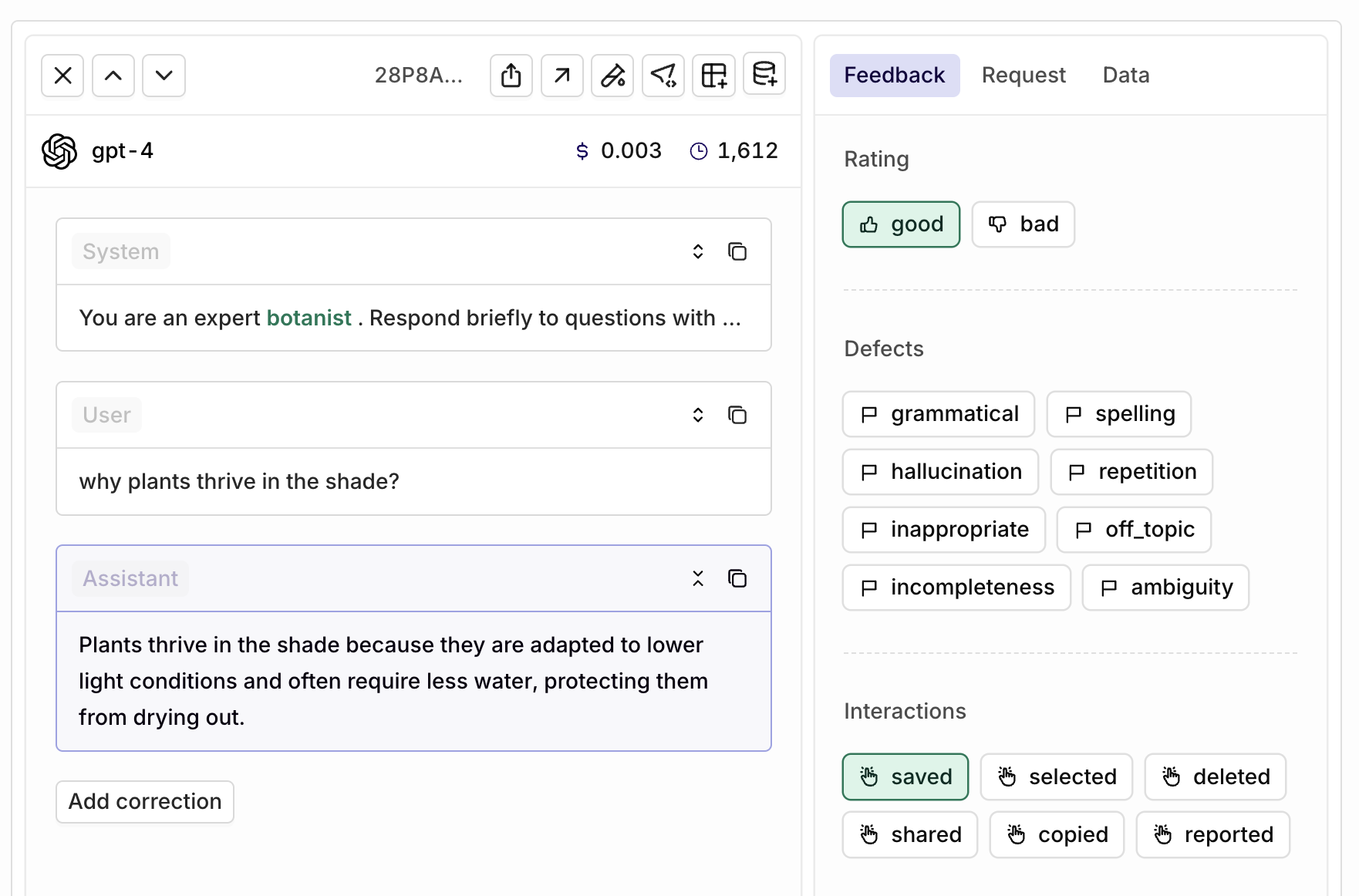

Rating

Rate the overall quality of AI responses:

| Rating | Description |
|---|---|
| good | The response was helpful and accurate. |
| bad | The response was unhelpful or inaccurate. |

Defects

Identify specific issues with AI responses:

| Defect | Description |
|---|---|
| grammatical | Responses that contain grammatical errors |
| spelling | Responses that contain spelling errors |
| hallucination | Responses that contain hallucinations or factual inaccuracies |
| repetition | Responses that contain unnecessary repetition |
| inappropriate | Responses that are deemed inappropriate or offensive |
| off_topic | Responses that do not address the user’s query |
| incompleteness | Responses that are incomplete or only partially address the query |
| ambiguity | Responses that are vague or unclear |

You can select multiple defects for one response by using an array-type Human Review.
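A multi-select defect annotation can be pictured as a list of keys drawn from the table above. The sketch below checks such a list locally; the `validate_defects` helper is hypothetical and only illustrates the idea of an array-type value.

```python
# Defect keys taken from the table above.
DEFECTS = frozenset({
    "grammatical", "spelling", "hallucination", "repetition",
    "inappropriate", "off_topic", "incompleteness", "ambiguity",
})


def validate_defects(selected):
    """Return the selection without duplicates, preserving order.

    Raises ValueError if any key is not a known defect.
    """
    unknown = [key for key in selected if key not in DEFECTS]
    if unknown:
        raise ValueError(f"unknown defect keys: {unknown}")
    # dict.fromkeys keeps first-seen order while dropping repeats.
    return list(dict.fromkeys(selected))


defects = validate_defects(["hallucination", "off_topic", "hallucination"])
print(defects)
```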

Use Annotations

AI Studio

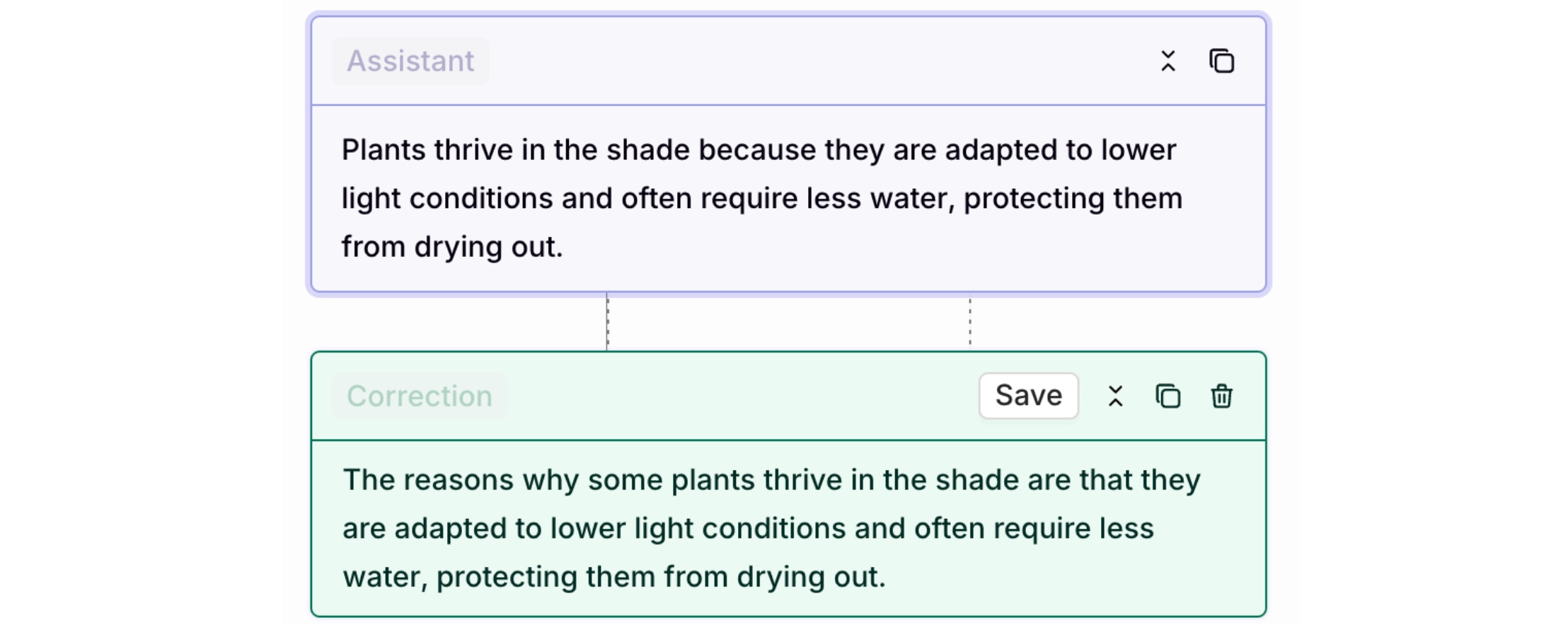

The annotation capabilities differ between Logs and Traces. Logs support both human feedback and corrections, while Traces only support human feedback annotations.

Traces

Navigate to the Traces view and select a single trace. The Annotations panel will be displayed, allowing you to apply human feedback to the AI response.

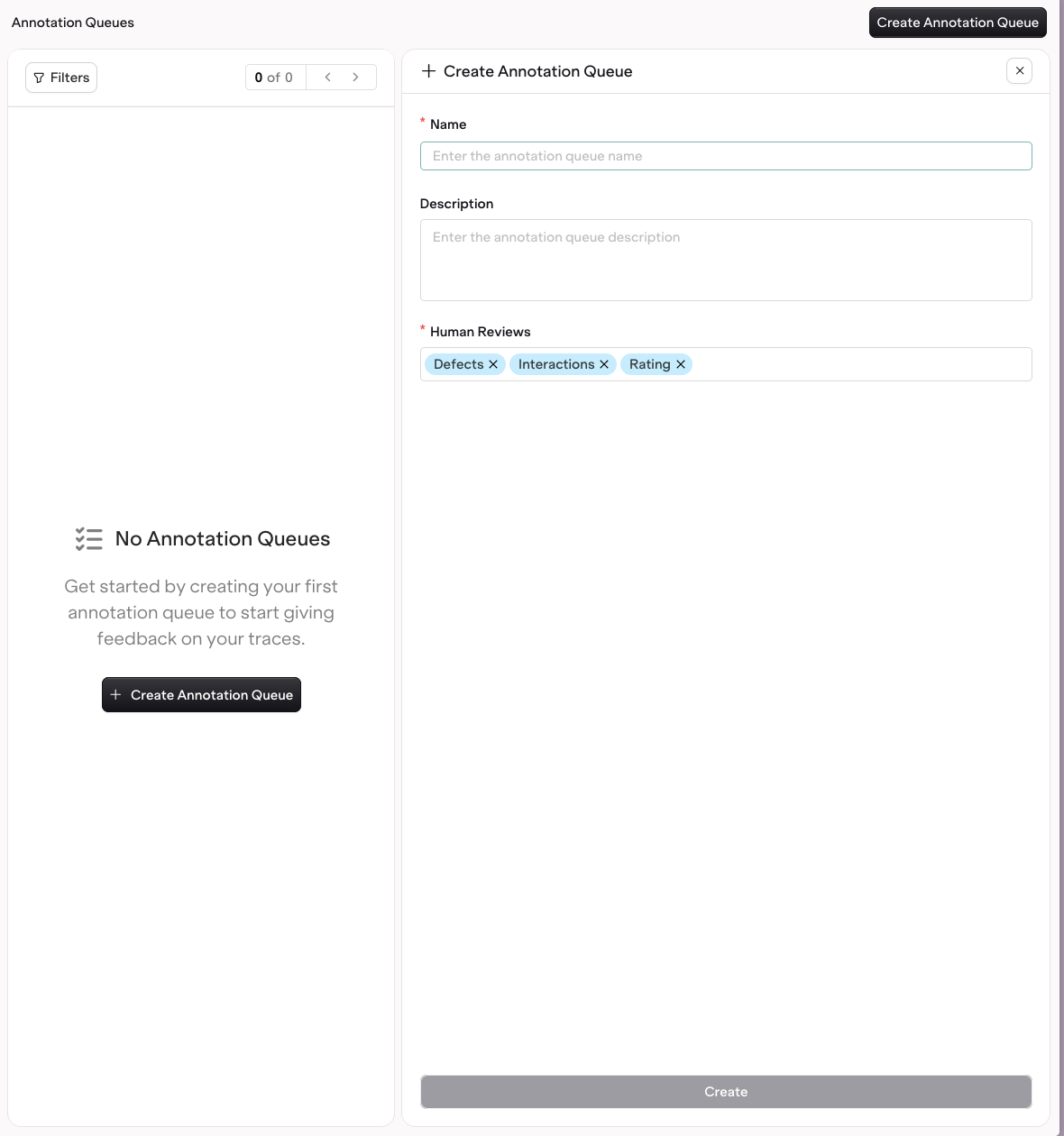

Create Annotation Queues

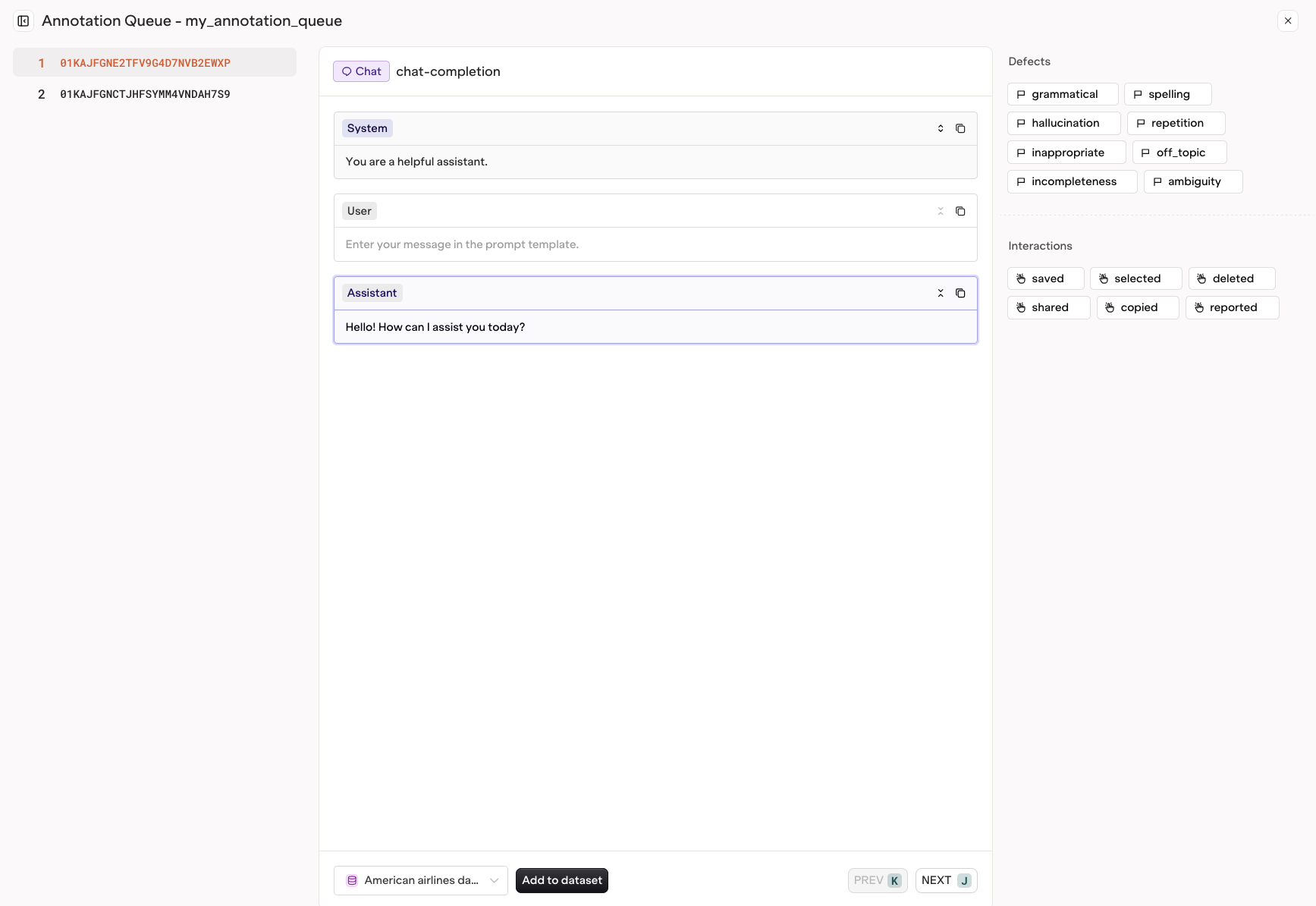

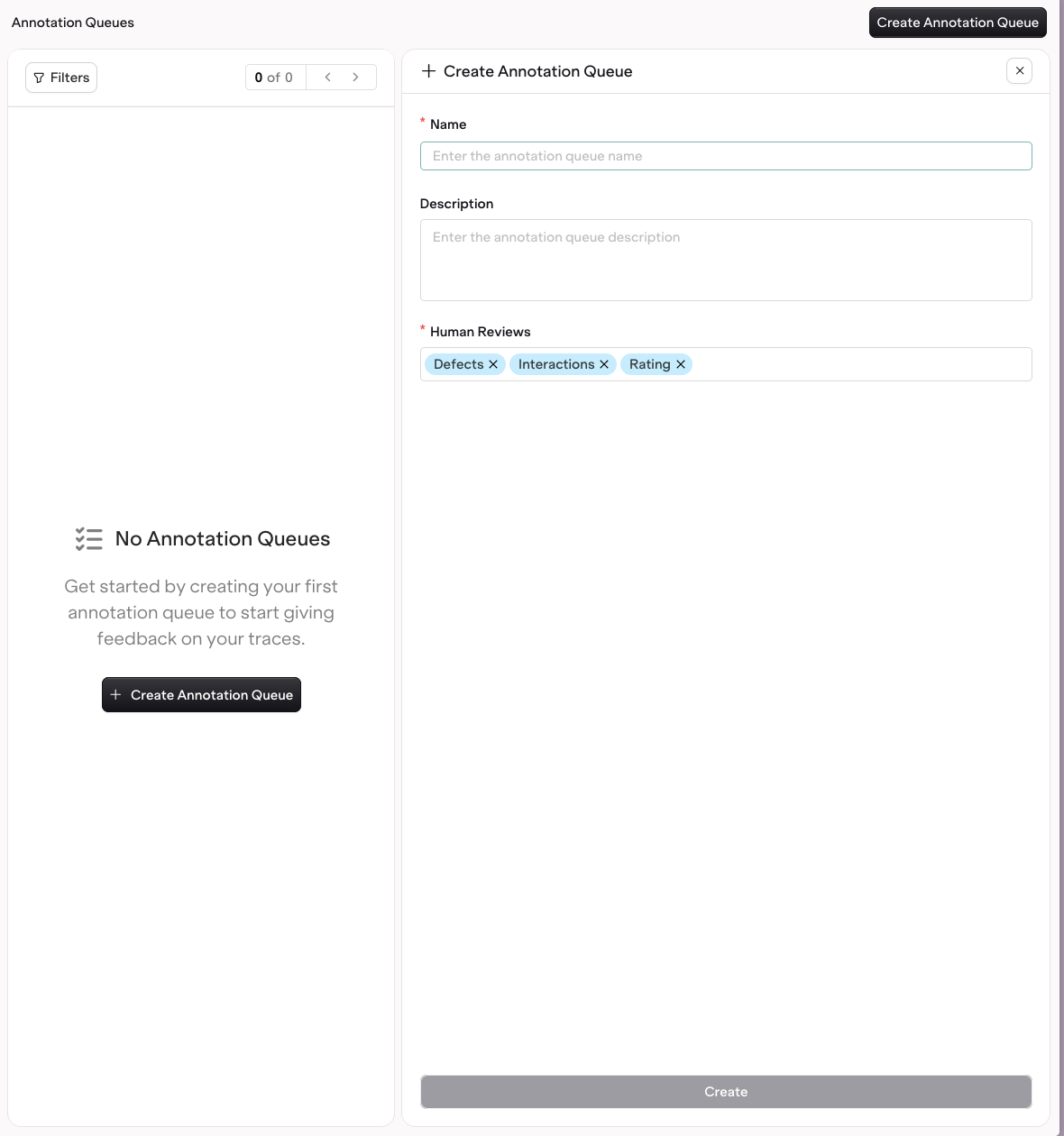

Annotation Queues help you organize and apply Human Reviews effectively to relevant incoming traces.

AI Studio

To create an Annotation Queue, head to AI Studio > Annotation Queue. Choose Create Annotation Queue. The following fields are configurable:

- The Name of the queue

- The Description of the Annotation Queue

- The Human Reviews that traces will be reviewed by

Use Annotation Queues

AI Studio

When opening an Annotation Queue, you have easy access to all traces that need feedback.
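One way to think about "traces that need feedback" is as traces missing a value for at least one of the queue's Human Reviews. The sketch below models that interpretation locally; the `AnnotationQueue` class, the trace shape, and the `pending` helper are assumptions for illustration, and the platform may define pending work differently.

```python
from dataclasses import dataclass


@dataclass
class AnnotationQueue:
    # Mirrors the configurable fields: name, description, Human Reviews.
    name: str
    description: str
    human_reviews: list  # keys of the Human Reviews applied in this queue


def pending(queue, traces):
    """Return ids of traces lacking a value for any of the queue's Human Reviews."""
    return [
        trace["trace_id"]
        for trace in traces
        if any(key not in trace["annotations"] for key in queue.human_reviews)
    ]


queue = AnnotationQueue(
    name="Support QA",
    description="Weekly review of support answers",
    human_reviews=["rating", "defects"],
)

# Hypothetical traces with the annotations collected so far.
traces = [
    {"trace_id": "t1", "annotations": {"rating": "good", "defects": []}},
    {"trace_id": "t2", "annotations": {"rating": "bad"}},
    {"trace_id": "t3", "annotations": {}},
]

print(pending(queue, traces))
```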