Enabling new Models

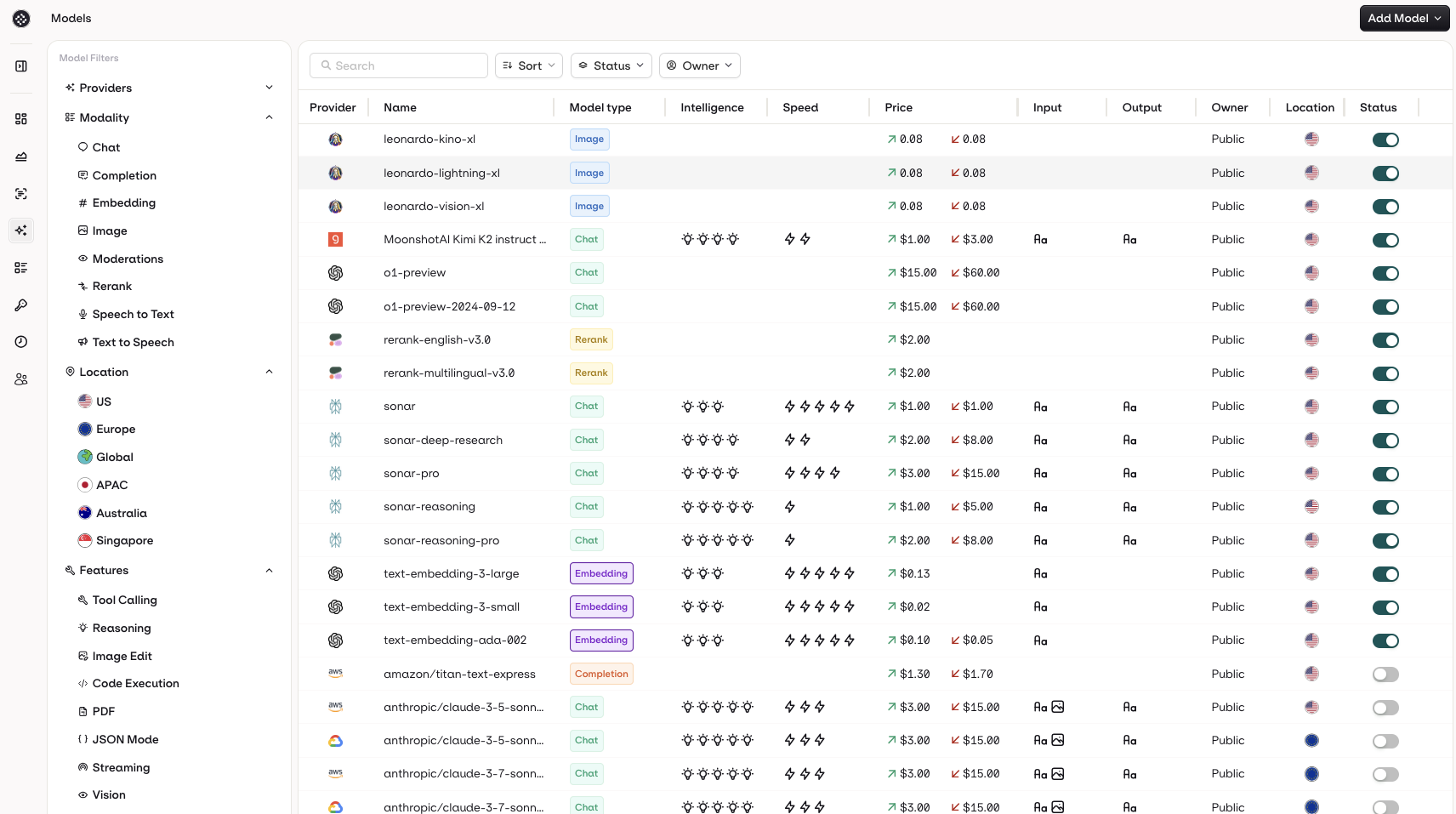

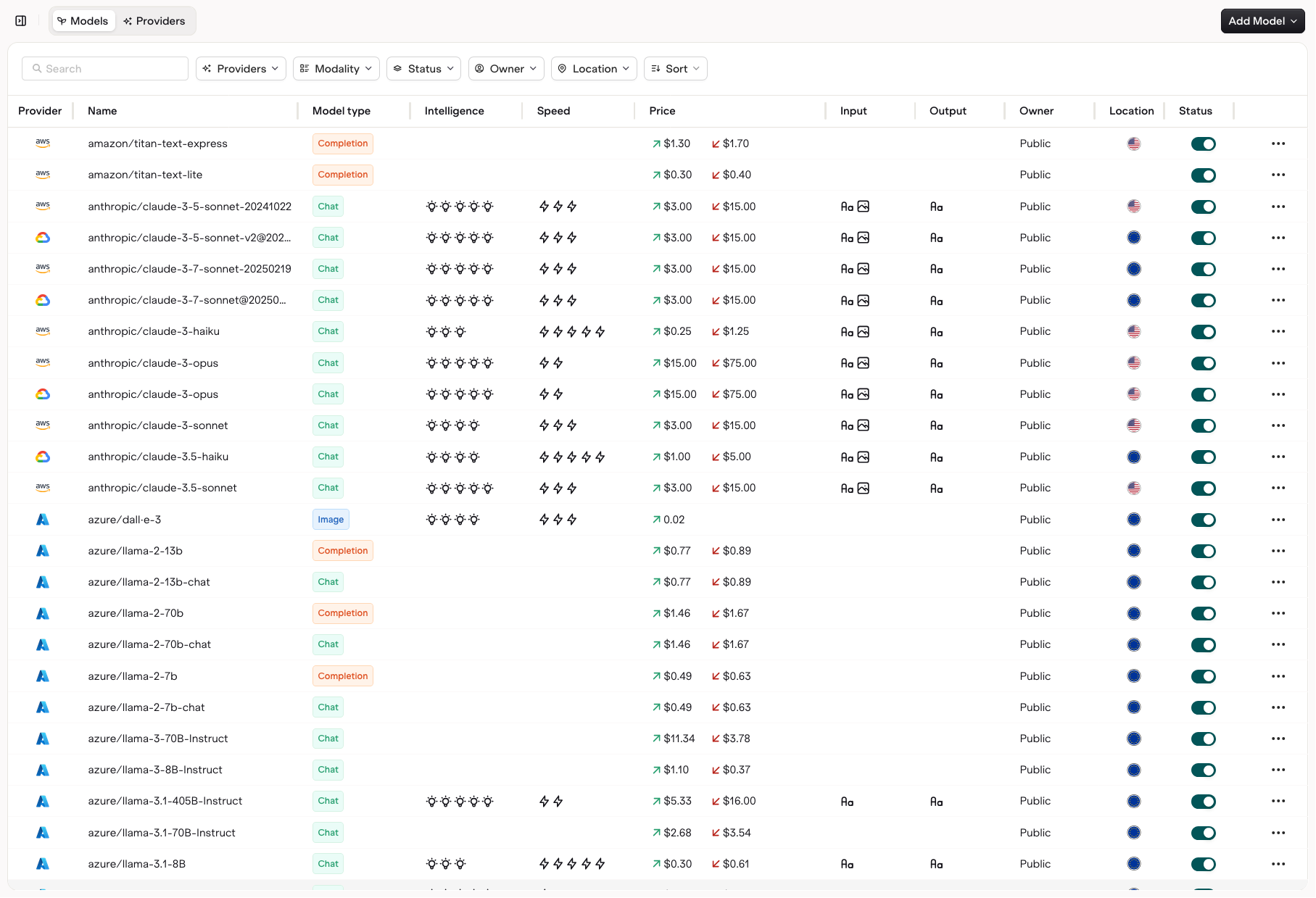

To see your available Models and enable them for use, head to the AI Router section in AI Studio and open the Models page.

You can easily compare models across multiple columns, such as:

- Provider and Model Type — to identify the source and intended use of the model

- Modality — to see whether the model supports text, image, or both for input and output

- Intelligence and Speed Ratings — to quickly assess performance tradeoffs

- Token and Pricing Data — to understand input and output costs

- Region and Release Date — to identify where and when models are available

Use the Status Toggle to Enable a model for use with the AI Router.

Filters

You have access to multiple filters to search models:

- Providers lets you filter by LLM provider.

- Model Type lets you choose which type of model to show (Chat, Completion, Embedding, Rerank, Vision).

- Active lets you filter on enabled or disabled models in your workspace.

- Owner lets you filter between Orq.ai provided models and private models.

- API Key Status lets you filter models for which you have added an API key.

Using your own API keys

To start using models, you need to bring your own API keys. Head to the Providers tab to add keys for the supported providers.

Onboarding Private Models

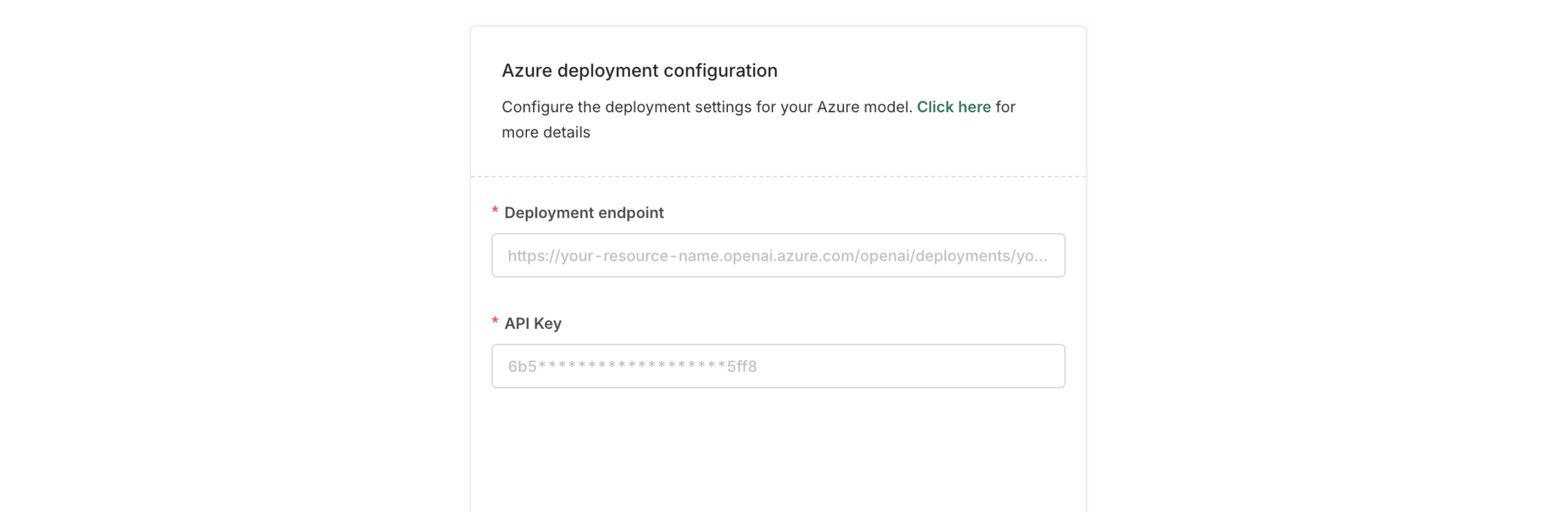

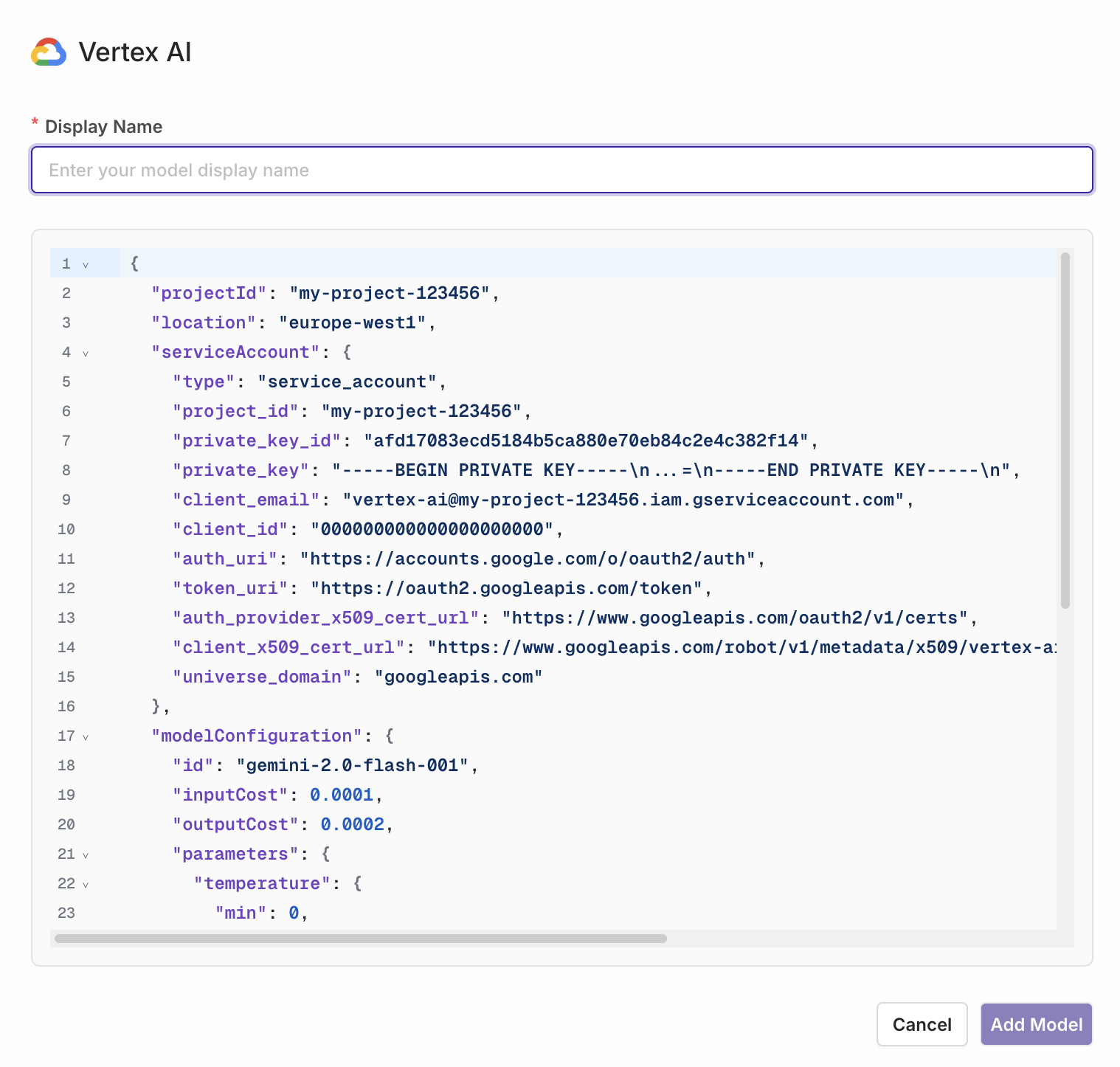

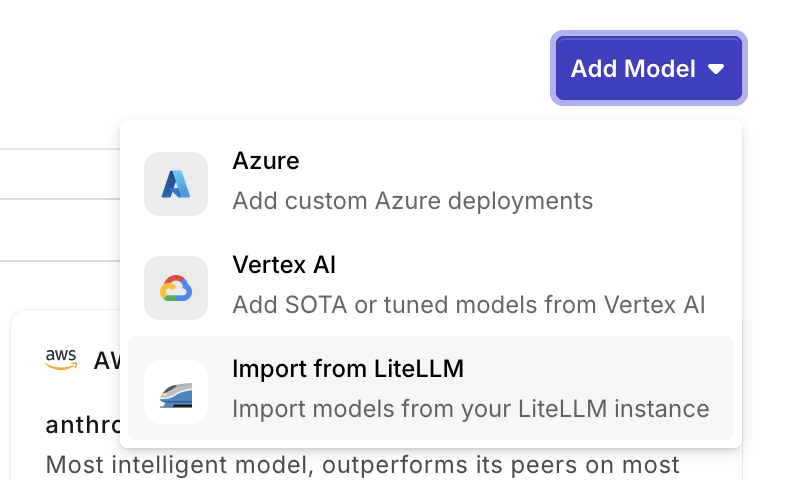

You can onboard private models by choosing Add Model at the top-right of the screen. This is useful when you have a fine-tuned model you want to use.

Private Models Providers

Referencing Private Models in Code

When referencing private models through our SDKs, API, or Supported Libraries, use the following string: <workspacename>@<provider>/<modelname>.

Example: corp@azure/gpt-4o-2024-05-13
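The identifier format above can be assembled and checked with a small helper. This is a minimal sketch, not part of any Orq.ai SDK: the function names build_private_model_id and parse_private_model_id are hypothetical, and the validation rules (no whitespace, no extra @ or / in the workspace and provider parts) are assumptions made for illustration.

```python
import re

# Matches <workspacename>@<provider>/<modelname>.
# The allowed characters are an assumption for this sketch, not a documented rule.
_PRIVATE_MODEL_RE = re.compile(
    r"^(?P<workspace>[^@/\s]+)@(?P<provider>[^@/\s]+)/(?P<model>\S+)$"
)


def build_private_model_id(workspace: str, provider: str, model: str) -> str:
    """Join the three parts into the private model reference string."""
    return f"{workspace}@{provider}/{model}"


def parse_private_model_id(model_id: str) -> tuple[str, str, str]:
    """Split a private model reference into (workspace, provider, model)."""
    match = _PRIVATE_MODEL_RE.match(model_id)
    if match is None:
        raise ValueError(f"not a private model reference: {model_id!r}")
    return match.group("workspace"), match.group("provider"), match.group("model")


if __name__ == "__main__":
    model_id = build_private_model_id("corp", "azure", "gpt-4o-2024-05-13")
    print(model_id)
    print(parse_private_model_id(model_id))
```

The resulting string is what you would pass wherever a model name is expected when calling a private model.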

Auto Router

Route requests automatically between a Strong Model and an Economical Model based on task complexity. See the Auto Router page for setup, profiles, and recommended model pairs.