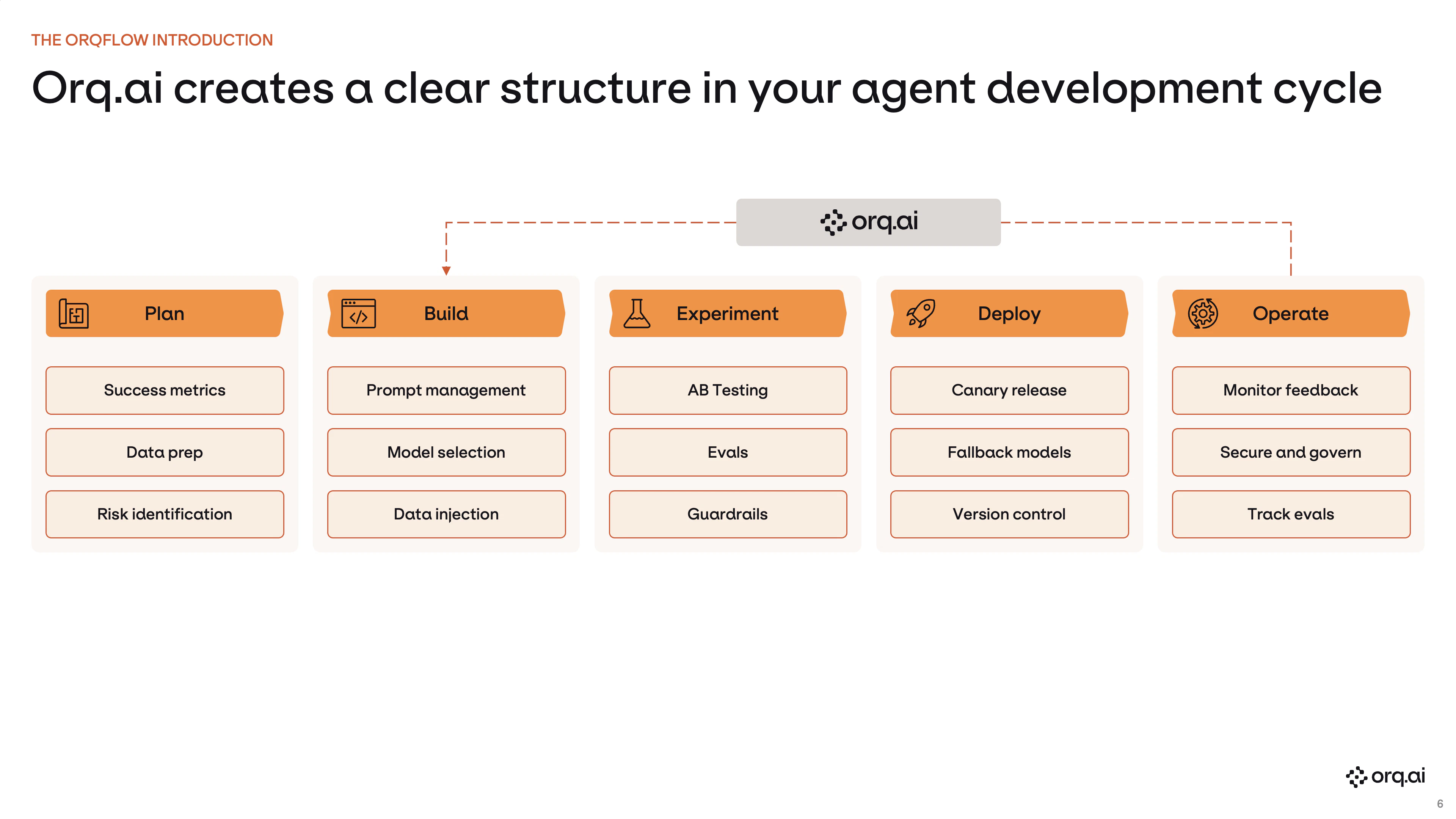

orq.ai organizes AI development into five connected stages. Each stage has a clear owner, feeds into the next, and the later stages loop back, creating a continuous improvement cycle for your AI applications.

Plan

Product Manager

The most important thing to define upfront is what quality means for your feature. LLM outputs are probabilistic: there is no single right answer, so success criteria must be concrete enough to measure. The quality bar you set here becomes the foundation for the evaluators you write, the datasets you curate, and the experiments you run both before and after production.

What you’ll do:

- Define the intended behavior and the user interactions the feature must handle

- Specify measurable quality criteria (tone, accuracy, format, safety, etc.)

- Scope the work into Projects and invite Members with the right roles
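
To make "concrete enough to measure" tangible, here is a minimal sketch of quality criteria written as pass/fail checks in plain Python. It does not use the orq.ai SDK; the `Criterion` class, the `evaluate` function, and the three example criteria are hypothetical and only illustrate the idea for an imagined feature that must return short JSON answers.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Criterion:
    """One measurable quality criterion (hypothetical structure)."""
    name: str
    check: Callable[[str], bool]  # returns True when the output passes


def is_valid_json(output: str) -> bool:
    """Format check: the output must parse as JSON."""
    try:
        json.loads(output)
        return True
    except json.JSONDecodeError:
        return False


# Illustrative criteria only; a real feature would define its own.
CRITERIA = [
    Criterion("format: valid JSON", is_valid_json),
    Criterion("length: at most 500 characters", lambda out: len(out) <= 500),
    Criterion("safety: no email addresses", lambda out: "@" not in out),
]


def evaluate(output: str) -> dict[str, bool]:
    """Apply every criterion and report pass/fail per criterion name."""
    return {c.name: c.check(output) for c in CRITERIA}
```

Criteria written this way can later be reused as the basis for evaluators, while subjective criteria such as tone usually need an LLM judge or human review instead of a deterministic check.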

Build

Full Stack Engineer, Subject Matter Expert

Engineers implement the application scaffolding; subject matter experts own the natural language layer: the system instructions, prompt structure, and retrieval configuration that drive model behavior.

What you’ll do:

- Author and iterate on prompts in the Playground

- Configure Agents for multi-step workflows

- Connect Knowledge Bases for retrieval-augmented generation

- Version prompt configurations in AI Studio independently of application code

Experiment

AI Engineer, Data Scientist

Validate configuration quality at scale before shipping. Run a representative input set against one or more configurations and score each output against the quality criteria defined in Plan.

What you’ll do:

- Curate representative Datasets of real or synthetic inputs

- Run configurations side by side in Experiments

- Score outputs automatically with Evaluators (LLM-as-a-Judge, Python, or human review)
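
The experiment loop described above can be sketched in a few lines of plain Python. This is a conceptual illustration under stated assumptions, not the orq.ai Experiments API: `run_experiment`, the two stand-in configurations (`terse`, `verbose`), and `brevity_evaluator` are all hypothetical, and real configurations would call a model rather than transform strings.

```python
from statistics import mean
from typing import Callable, Dict, List

Config = Callable[[str], str]            # stand-in for a prompt/model configuration
Evaluator = Callable[[str, str], float]  # (input, output) -> score in [0, 1]


def run_experiment(
    dataset: List[str], configs: Dict[str, Config], evaluator: Evaluator
) -> Dict[str, float]:
    """Run every configuration over the dataset and return its mean score."""
    results = {}
    for name, generate in configs.items():
        scores = [evaluator(item, generate(item)) for item in dataset]
        results[name] = mean(scores)
    return results


# Hypothetical stand-ins for two configurations under comparison.
def terse(text: str) -> str:
    return text.split(".")[0] + "."  # keep only the first sentence


def verbose(text: str) -> str:
    return text + " " + text  # repeat the input


def brevity_evaluator(_input: str, output: str) -> float:
    """Hypothetical Python evaluator: pass if the output is at most 40 characters."""
    return 1.0 if len(output) <= 40 else 0.0


dataset = [
    "Refunds take five days. Contact support for help.",
    "Shipping is free.",
]
scores = run_experiment(
    dataset, {"terse": terse, "verbose": verbose}, brevity_evaluator
)
print(scores)  # {'terse': 1.0, 'verbose': 0.5}
```

Comparing mean scores per configuration on the same dataset is what makes the side-by-side comparison meaningful: every configuration sees identical inputs and is judged by the same evaluator.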

Deploy

AI Engineer, Full Stack Engineer

Publish a validated configuration to production. Model, instructions, and parameters can be updated from the Studio without a code change or redeployment, keeping iteration cycles short once the feature is live.

What you’ll do:

- Publish prompt and agent configurations as versioned Deployments

- Route traffic across models with fallbacks, load balancing, and cost controls via the AI Router
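
As a rough illustration of what a fallback chain does, the sketch below tries each model in order and returns the first successful response. In orq.ai this behavior is configured in the AI Router rather than written in application code, so everything here (`call_with_fallbacks`, `AllModelsFailed`, and the two stand-in model functions) is a hypothetical model of the concept, not the router's API.

```python
from typing import Callable, Sequence, Tuple

ModelCall = Callable[[str], str]


class AllModelsFailed(Exception):
    """Raised when every model in the fallback chain has failed."""


def call_with_fallbacks(
    prompt: str, models: Sequence[Tuple[str, ModelCall]]
) -> Tuple[str, str]:
    """Try each (name, call) pair in order; return (name, response) on first success."""
    errors = []
    for name, call in models:
        try:
            return name, call(prompt)
        except Exception as exc:  # any failure moves on to the next model
            errors.append(f"{name}: {exc}")
    raise AllModelsFailed("; ".join(errors))


# Hypothetical stand-ins: the primary model times out, the secondary answers.
def primary_model(prompt: str) -> str:
    raise TimeoutError("request timed out")


def secondary_model(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = call_with_fallbacks(
    "hello", [("primary", primary_model), ("secondary", secondary_model)]
)
```

Here `used` is `"secondary"` because the primary stand-in always raises. Returning the name of the model that answered alongside the response keeps the fallback visible to monitoring later on.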

Operate

AI Engineer, Data Scientist

Every request is captured automatically. Use production signal to understand real behavior, catch regressions, and continuously improve, closing the loop back into Experiment.

What you’ll do:

- Inspect requests step by step in Traces

- Monitor agent-level performance and step timelines in Control Tower

- Label production spans with Annotations to build evaluation datasets from real traffic

- Track cost, error rates, and model usage trends in Analytics
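
The kind of aggregation that sits behind cost and error-rate tracking can be sketched as follows. The record fields (`model`, `error`, `cost_usd`) and the `summarize` function are assumptions made for illustration; they are not the schema or API that orq.ai uses for captured requests.

```python
from collections import defaultdict
from typing import Dict, Iterable


def summarize(records: Iterable[dict]) -> Dict[str, dict]:
    """Aggregate request count, error count, cost, and error rate per model."""
    totals = defaultdict(lambda: {"requests": 0, "errors": 0, "cost_usd": 0.0})
    for record in records:
        stats = totals[record["model"]]
        stats["requests"] += 1
        stats["errors"] += 1 if record["error"] else 0
        stats["cost_usd"] += record["cost_usd"]
    return {
        model: {**stats, "error_rate": stats["errors"] / stats["requests"]}
        for model, stats in totals.items()
    }


# Hypothetical request log with made-up values.
records = [
    {"model": "model-a", "error": False, "cost_usd": 0.002},
    {"model": "model-a", "error": True, "cost_usd": 0.0},
    {"model": "model-b", "error": False, "cost_usd": 0.004},
]
summary = summarize(records)
```

With these made-up records, `model-a` has two requests and one error, so its error rate is 0.5. A rising error rate or cost per model after a configuration change is the kind of regression worth feeding back into an experiment.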